KubeSphere 3.4.0 has just been released with support for K8s v1.26. This article takes an early look at it.

Preface

Key points

- Level: beginner

- Installing and deploying KubeSphere and Kubernetes with KubeKey

- Customizing a cluster deployment with KubeKey

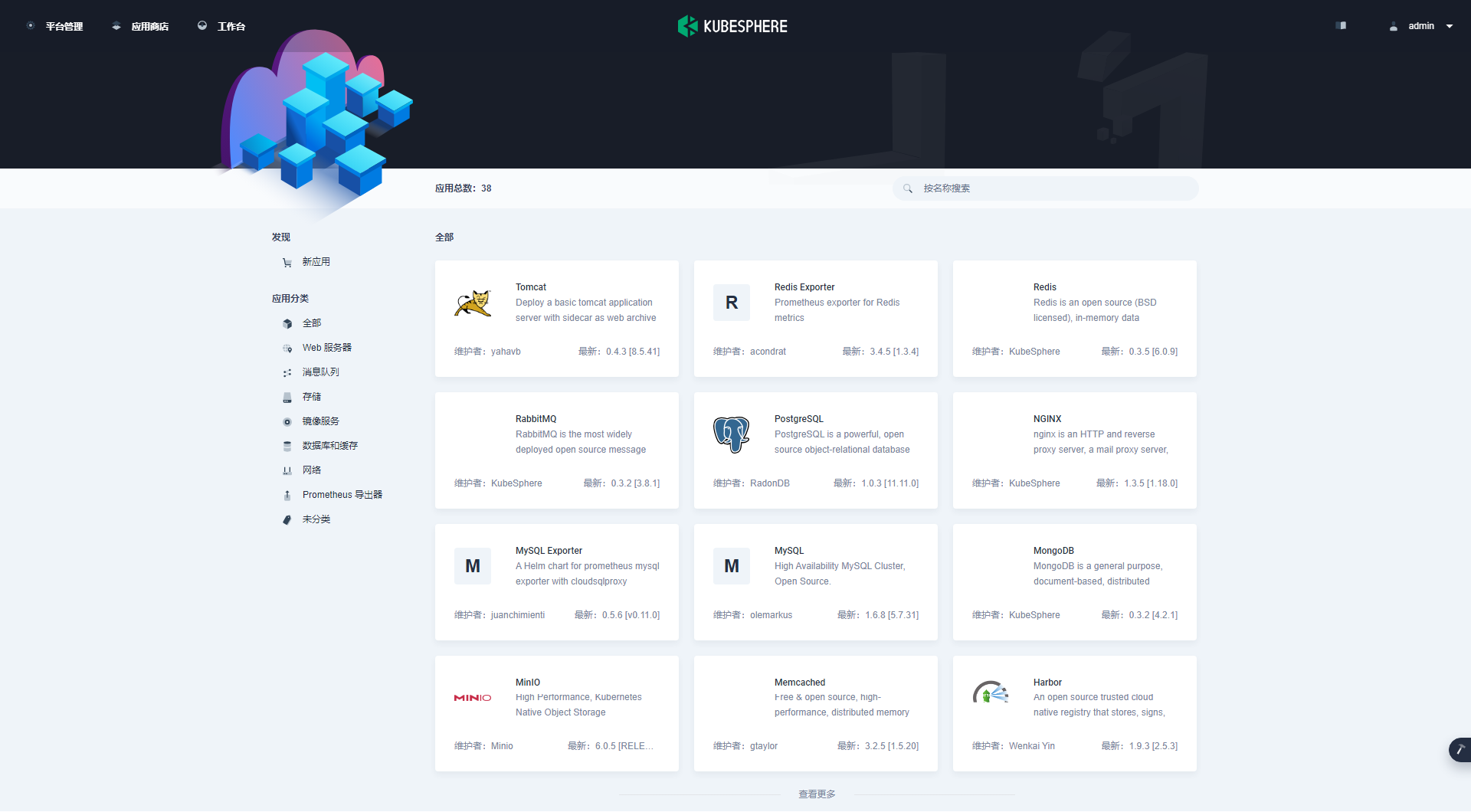

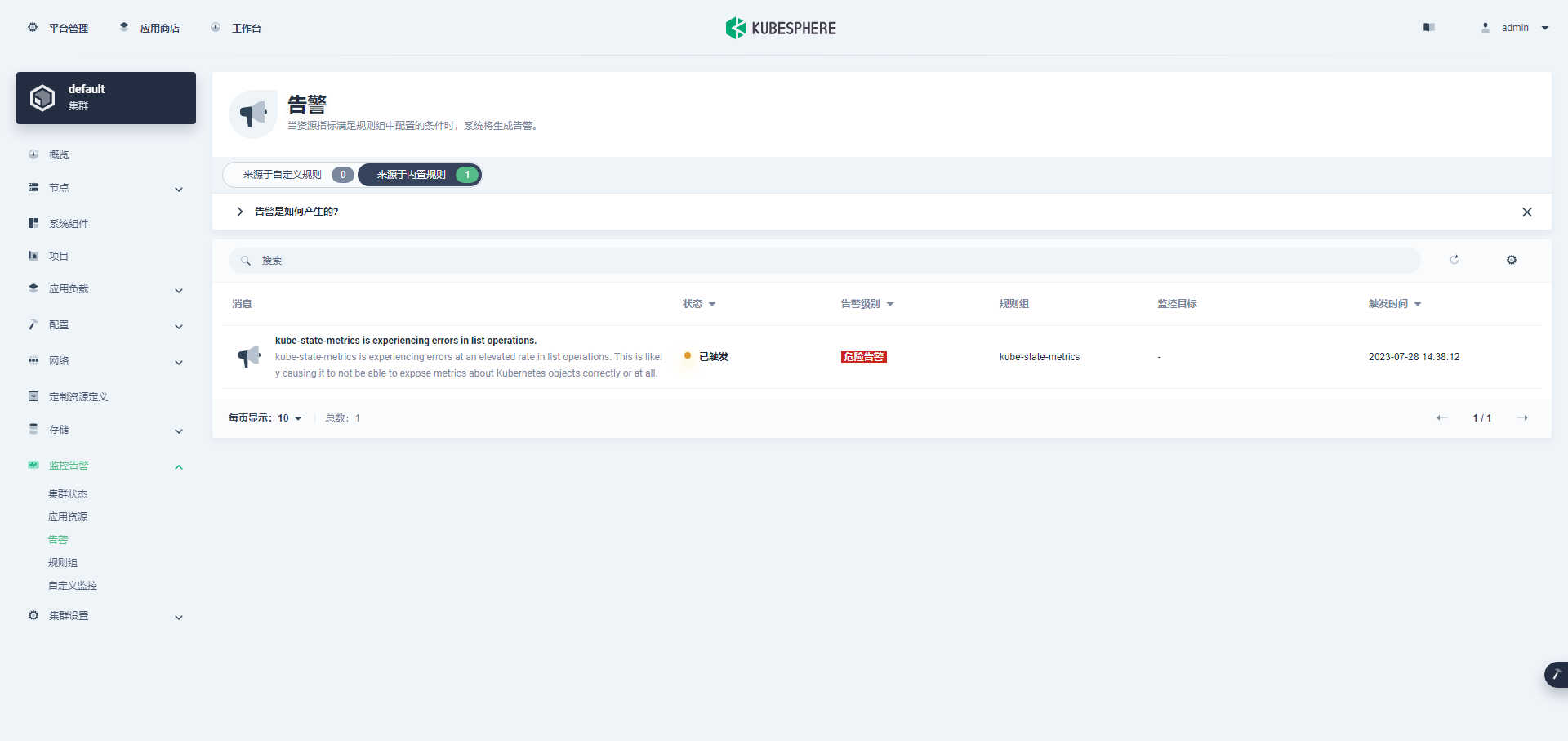

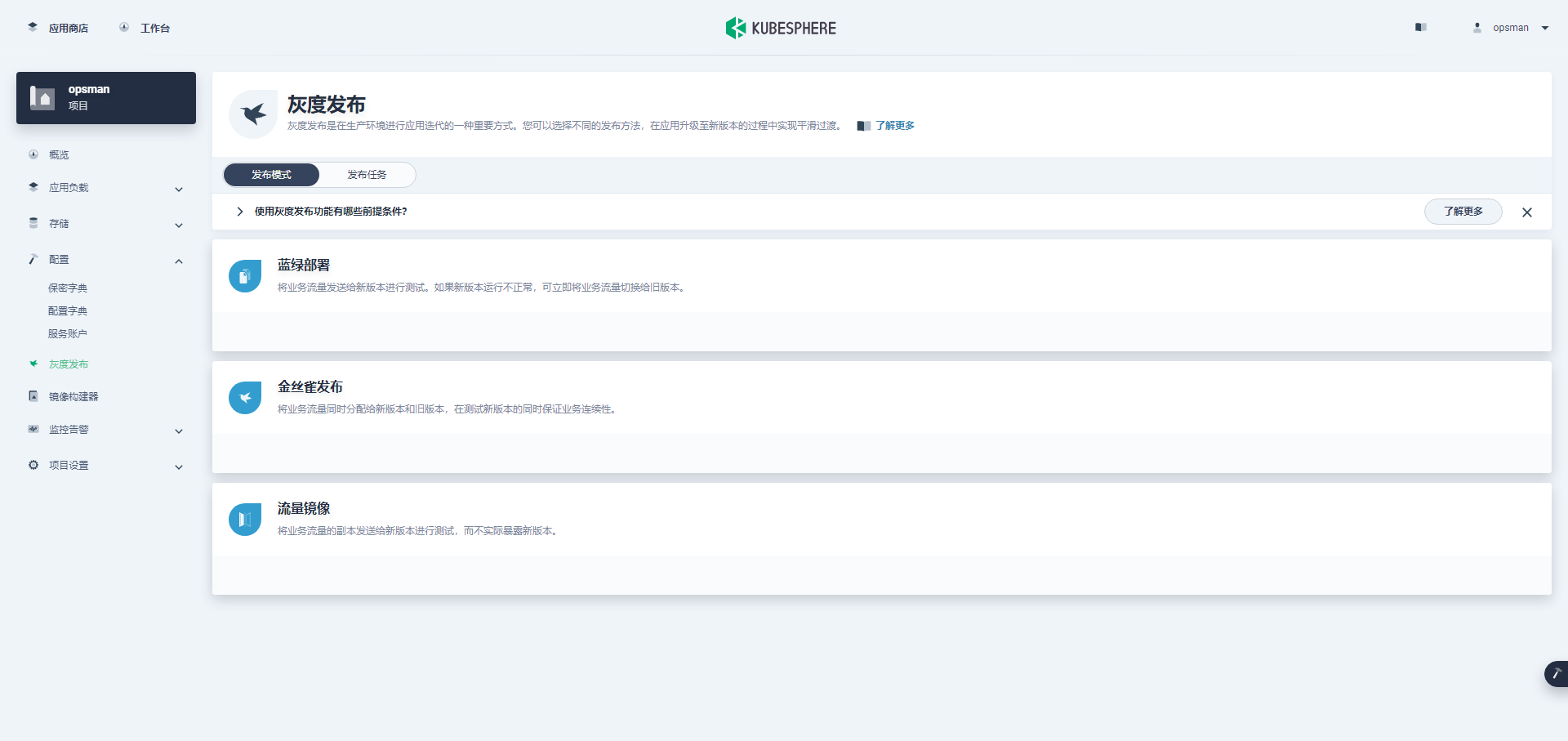

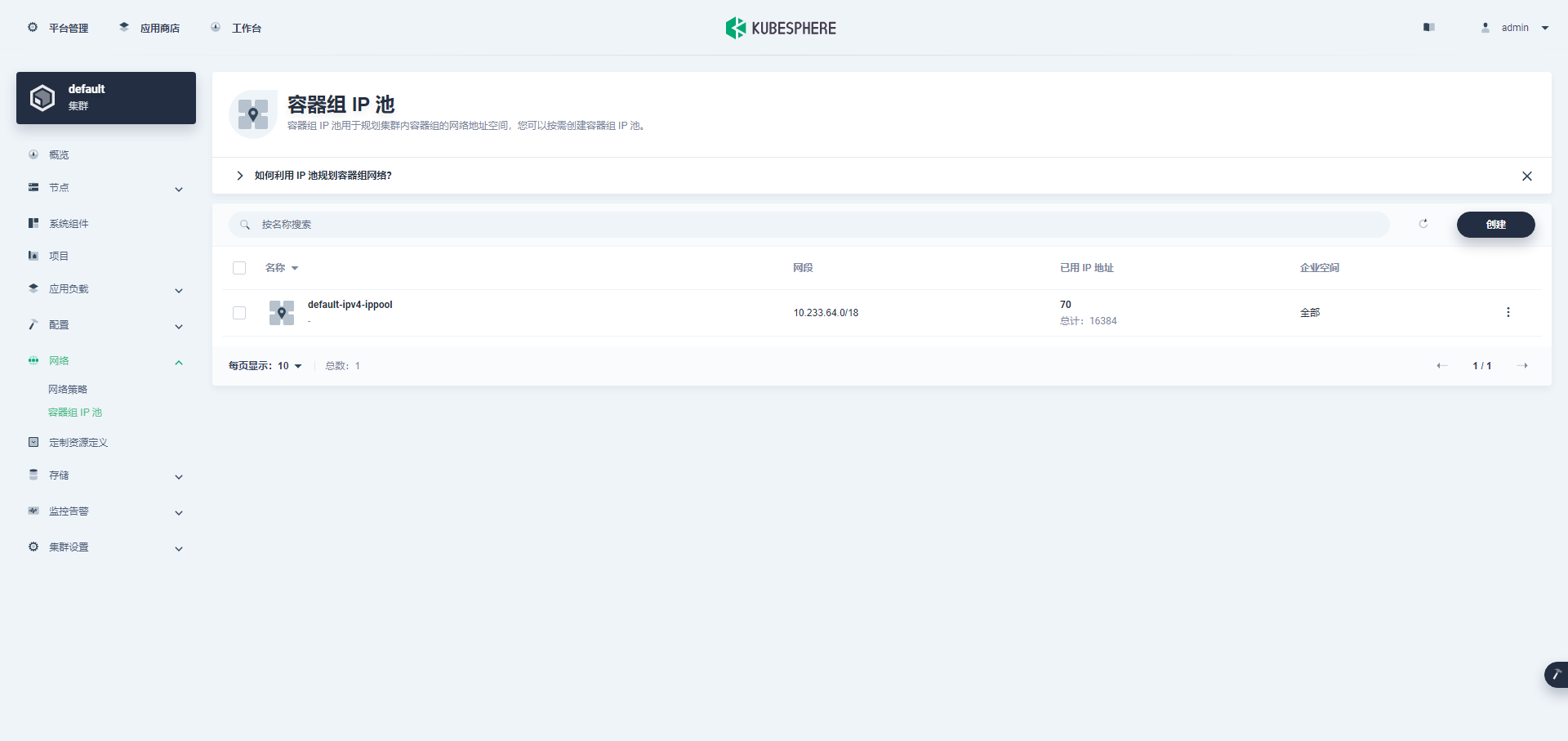

- KubeSphere v3.4.0 feature overview

- Basic Kubernetes operations

- CentOS kernel upgrade

- Enabling KubeSphere pluggable components

- KubeSphere resource consumption analysis

Demo server configuration

| Hostname | IP | CPU (cores) | Memory (GB) | System disk (GB) | Data disk (GB) | Role |
|---|---|---|---|---|---|---|
| ks-master-1 | 192.168.9.61 | 4 | 8 | 50 | 0 | KubeSphere/k8s-master |
| ks-master-2 | 192.168.9.62 | 4 | 8 | 50 | 0 | KubeSphere/k8s-master |
| ks-master-3 | 192.168.9.63 | 4 | 8 | 50 | 0 | KubeSphere/k8s-master |
| ks-worker-1 | 192.168.9.65 | 8 | 16 | 50 | 0 | k8s-worker |
| ks-worker-2 | 192.168.9.66 | 8 | 16 | 50 | 0 | k8s-worker |
| ks-worker-3 | 192.168.9.67 | 8 | 16 | 50 | 0 | k8s-worker |
| Total | 6 | 36 | 72 | 300 | 0 | |

Software versions used in this walkthrough

Operating system: CentOS 7.9 x86_64

KubeSphere: v3.4.0

Kubernetes: v1.26.5

Containerd: 1.6.4

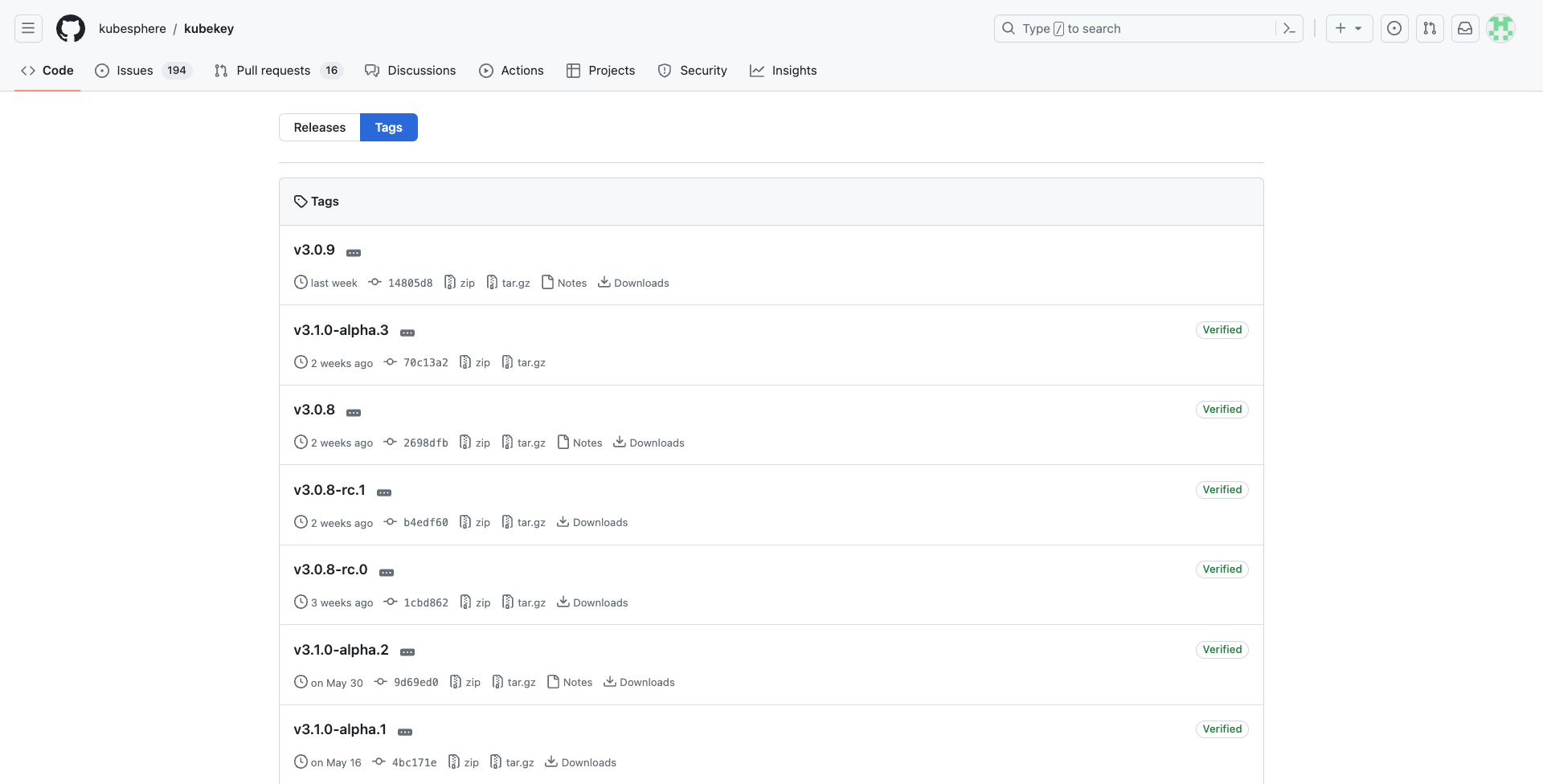

KubeKey: v3.0.9

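About this article

The original goal was to use KubeKey v3.0.9 to deploy the latest KubeSphere v3.4.0 together with Kubernetes v1.27. During deployment I ran into what I believe is a bug and could not continue, so I had to switch to Kubernetes v1.26.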

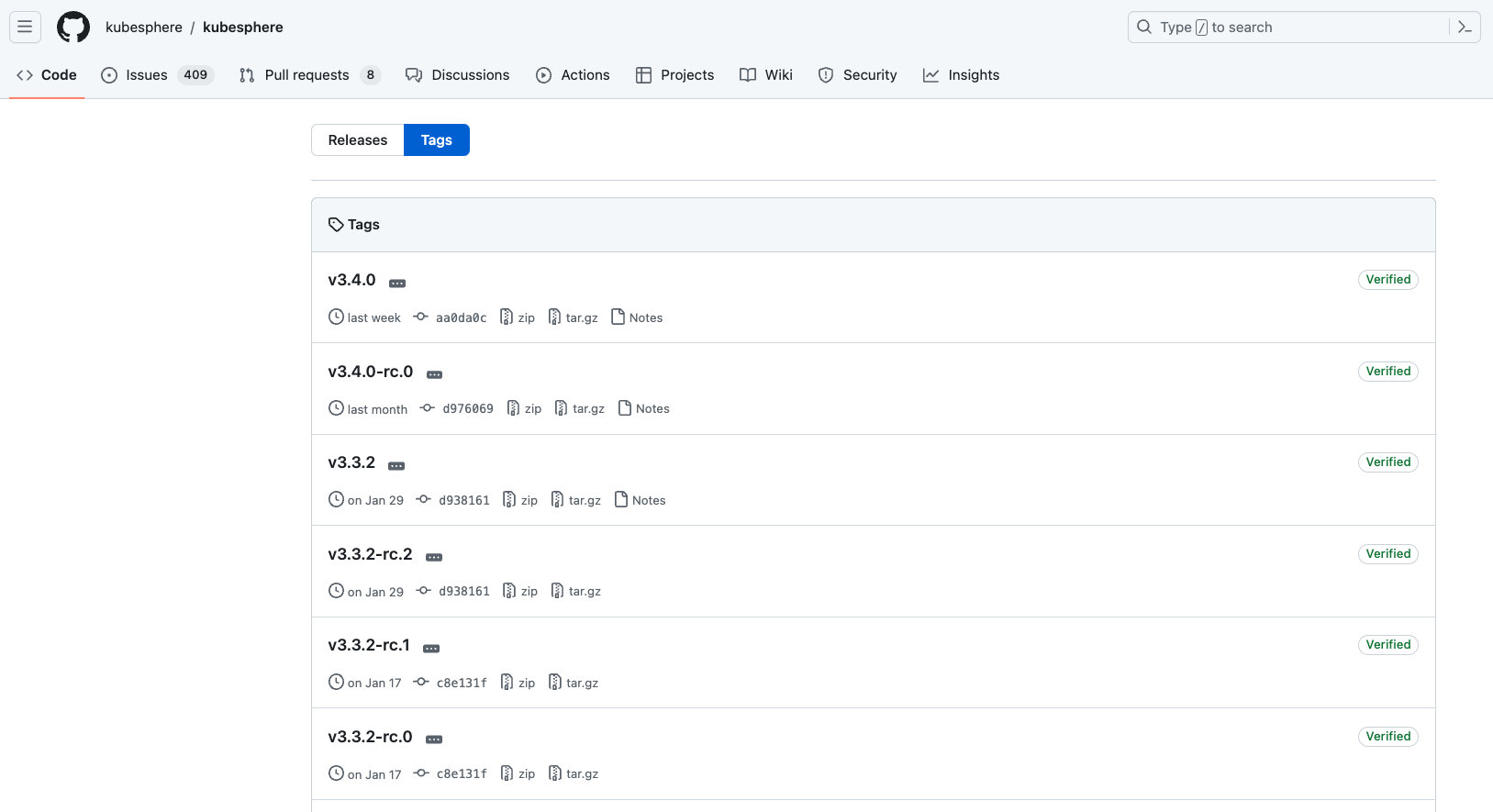

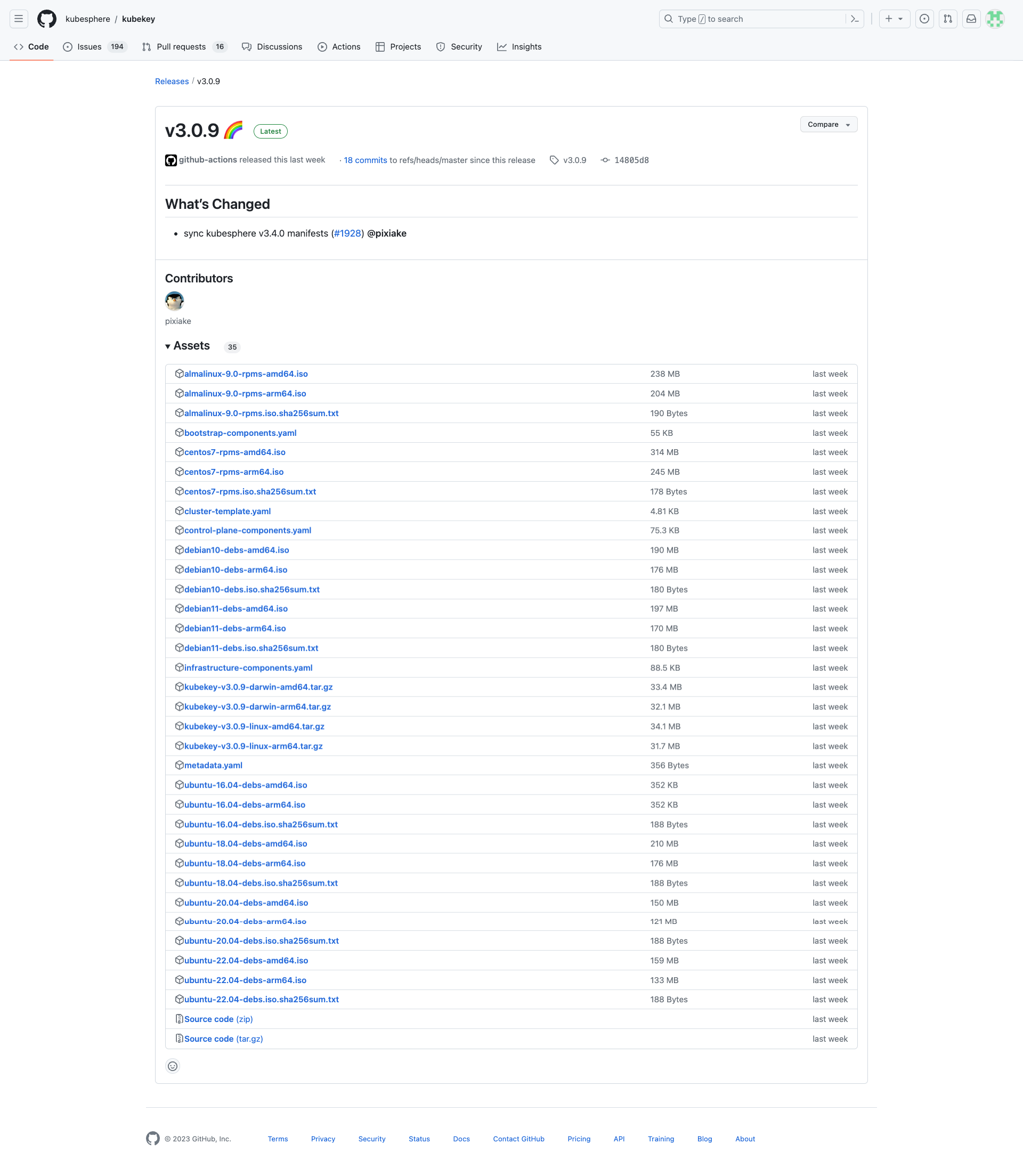

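Recently the KubeSphere community repository published KubeSphere v3.4.0. To support it, KubeKey shipped v3.0.9 as an urgent follow-up only one week after v3.0.8.

Note: the corresponding release notes are long and do not fit in a screenshot, so please read them yourself.

Note: v3.0.8 and v3.0.9 were released only one week apart, and v3.0.9 has a single Changed entry.

So this article is only an early preview; treat the official release as authoritative.

This article is light on technical depth. It mainly covers the installation process of the new version and a first look at each component after installation. People who tried it early have complained that this version is full of bugs. Seeing is believing, so I tried it myself to find out what is really going on.

We will use KubeKey, the tool developed by KubeSphere, on CentOS 7.9 to deploy a Kubernetes v1.26 cluster and KubeSphere v3.4.0 in high-availability mode on 6 servers, simulating a small real-world production environment.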

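Basic operating system configuration

To demonstrate most of the features of the new version, this article uses an architecture of 3 Master and 3 Worker nodes and enables every pluggable component except KubeEdge during deployment.

Unless stated otherwise, perform the following steps on all K8s cluster nodes. This article uses only the Master-1 node for demonstration and assumes the other servers have been configured in the same way.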

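Configure the hostname

# Change the hostname

hostnamectl set-hostname ks-master-1

# Start a new shell session to verify that the hostname has changed

bash

Note: the hostname prefix for worker nodes is ks-worker-.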
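Since the hostname step has to be repeated on every node, here is a minimal sketch of one way to derive the right command per node. It assumes the IP-to-hostname mapping from the server table above; the helper names `hostname_for_ip` and `set_hostname_cmd` are hypothetical and not part of any tool. The script only prints the command and changes nothing.

```shell
# Map a node IP to its planned hostname (values taken from the server table).
hostname_for_ip() {
  case "$1" in
    192.168.9.61) echo ks-master-1 ;;
    192.168.9.62) echo ks-master-2 ;;
    192.168.9.63) echo ks-master-3 ;;
    192.168.9.65) echo ks-worker-1 ;;
    192.168.9.66) echo ks-worker-2 ;;
    192.168.9.67) echo ks-worker-3 ;;
    *) echo "unknown IP: $1" >&2; return 1 ;;
  esac
}

# Print (dry run) the hostnamectl command that belongs to the given node IP.
set_hostname_cmd() {
  name=$(hostname_for_ip "$1") || return 1
  echo "hostnamectl set-hostname $name"
}

set_hostname_cmd 192.168.9.65
```

Printing instead of executing keeps the helper safe to try on any machine; the printed command can then be run on the matching node.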

Configure DNS

echo "nameserver 114.114.114.114" > /etc/resolv.conf

Configure the server time zone

Set the server time zone to Asia/Shanghai.

timedatectl set-timezone Asia/Shanghai

Verify the server time zone. A correct configuration looks like this.

[root@ks-master-1 ~]# timedatectl

Local time: Thu 2023-07-27 15:31:10 CST

Universal time: Thu 2023-07-27 07:31:10 UTC

RTC time: Thu 2023-07-27 07:31:10

Time zone: Asia/Shanghai (CST, +0800)

NTP enabled: yes

NTP synchronized: yes

RTC in local TZ: no

DST active: n/a
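The manual check above could also be scripted. The sketch below is one possible approach, not something the original setup uses: the hypothetical helper `time_settings_ok` reads `timedatectl`-style text on stdin and succeeds only if the expected time zone is set and the clock is reported as synchronized. It assumes the CentOS 7 label "NTP synchronized" and also accepts "System clock synchronized", which newer systemd releases print.

```shell
# Succeeds if the timedatectl-style text on stdin shows the expected time zone
# ($1) and a synchronized clock.
time_settings_ok() {
  expected_tz="$1"
  input=$(cat)
  printf '%s\n' "$input" |
    grep -Eq "Time zone:[[:space:]]*$expected_tz([[:space:]]|\$)" || return 1
  printf '%s\n' "$input" |
    grep -Eq '(NTP|System clock) synchronized:[[:space:]]*yes' || return 1
}

# Demonstration with sample text; on a real node use:
#   timedatectl | time_settings_ok Asia/Shanghai
printf 'Time zone: Asia/Shanghai (CST, +0800)\nNTP synchronized: yes\n' |
  time_settings_ok Asia/Shanghai && echo "time settings OK"
```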

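Tip: when the same command has to run on several machines, the multi-session feature of your terminal tool saves time. You could also use Ansible, as introduced in season one of this series (dropped this season in favor of typing commands by hand, for the benefit of operations beginners).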

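Configure time synchronization

Install chrony as the time synchronization software.

yum install chrony -y

Edit the configuration file /etc/chrony.conf and change the NTP server settings.

vi /etc/chrony.conf

# Delete all existing server entries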

server 0.centos.pool.ntp.org iburst

server 1.centos.pool.ntp.org iburst

server 2.centos.pool.ntp.org iburst

server 3.centos.pool.ntp.org iburst

# Add NTP servers located in China, or specify other commonly used time servers

pool cn.pool.ntp.org iburst

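Enable chrony at boot and restart it so that the new configuration takes effect.

systemctl enable chronyd --now

systemctl restart chronyd

Verify the chrony synchronization status.

# Run the query command

chronyc sourcestats -v

# Normal output looks like this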

[root@ks-master-1 ~]# chronyc sourcestats -v

210 Number of sources = 4

.- Number of sample points in measurement set.

/ .- Number of residual runs with same sign.

| / .- Length of measurement set (time).

| | / .- Est. clock freq error (ppm).

| | | / .- Est. error in freq.

| | | | / .- Est. offset.

| | | | | | On the -.

| | | | | | samples. \

| | | | | | |

Name/IP Address NP NR Span Frequency Freq Skew Offset Std Dev

==============================================================================

tock.ntp.infomaniak.ch 41 22 297m -0.227 0.504 -12ms 4011us

makaki.miuku.net 26 10 312m -0.014 0.497 +27ms 4083us

electrode.felixc.at 22 15 241m -0.399 1.350 -49ms 6966us

ntp5.flashdance.cx 38 14 315m +0.363 0.600 +27ms 4865us
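If the check is repeated on all six nodes, reading the full table each time gets tedious. As a small sketch, the hypothetical helper `chrony_source_count` below extracts only the number of sources from `chronyc sourcestats` output on stdin. It assumes the header line contains "Number of sources = N", as in the output above.

```shell
# Print the source count from "chronyc sourcestats" output read on stdin.
chrony_source_count() {
  awk -F'= *' '/Number of sources/ { print $2; exit }'
}

# Demonstration with the header line shown above; on a real node use:
#   chronyc sourcestats | chrony_source_count
printf '210 Number of sources = 4\n' | chrony_source_count
```

A count of 0, or no output at all, would suggest that chrony has no usable time sources.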

Disable the system firewall

systemctl stop firewalld && systemctl disable firewalld

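Disable SELinux

A minimal installation of CentOS 7.9 enables SELinux by default. To avoid trouble, we disable SELinux on all nodes.

# Use sed to edit the configuration file so that SELinux stays disabled permanently

sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

# Disable enforcement temporarily with a command. This step is optional because KubeKey configures it automatically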

setenforce 0
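Note that the sed expression above only rewrites a line that reads `SELINUX=enforcing`; a file already set to `permissive` stays unchanged. To confirm what the file contains afterwards, a helper like the hypothetical `selinux_config_mode` below can print the configured mode. This is a sketch; the demonstration uses a scratch file with made-up content instead of the real /etc/selinux/config.

```shell
# Print the value of the SELINUX= line (not SELINUXTYPE=) from the given file.
selinux_config_mode() {
  sed -n 's/^SELINUX=\(.*\)$/\1/p' "$1"
}

# Scratch file standing in for /etc/selinux/config (hypothetical content).
cfg=$(mktemp)
printf 'SELINUX=disabled\nSELINUXTYPE=targeted\n' > "$cfg"
selinux_config_mode "$cfg"
rm -f "$cfg"
```

On a real node the call would be `selinux_config_mode /etc/selinux/config`.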

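Install system dependencies

On all nodes, log in as root and run the following command to install the basic system dependencies for Kubernetes.

# Install Kubernetes system dependencies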

yum install curl socat conntrack ebtables ipset ipvsadm -y

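Configure SSH key-based authentication

KubeKey can use either a password or a key to authenticate to remote servers when it automates the deployment of KubeSphere and Kubernetes. This article demonstrates both methods in the same configuration, so passwordless SSH authentication has to be set up for the deployment user root.

Note: this section is optional. If you use password-only authentication for remote connections, you can skip it.

This article uses the master-1 node as the deployment node. The following steps only need to be run on master-1.

Log in as root and use ssh-keygen to generate a new SSH key pair. When the command finishes, the SSH public and private keys are stored in the /root/.ssh directory.

ssh-keygen -t ed25519

The command output looks like this: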

[root@ks-master-1 ~]# ssh-keygen -t ed25519

Generating public/private ed25519 key pair.

Enter file in which to save the key (/root/.ssh/id_ed25519):

Created directory '/root/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_ed25519.

Your public key has been saved in /root/.ssh/id_ed25519.pub.

The key fingerprint is:

SHA256:TCSHTDZ3PGHhfd0pkSHXy5W6jHJ+Lt60y9Pew3Bu6uU root@ks-master-1

The key's randomart image is:

+--[ED25519 256]--+

| o=.+.=+ ++ .|

| .o* +o.o..++|

| . ....+.=|

| o o.o |

| S o . |

| . o + . |

| + .*. |

| o+o+*.|

| ..=O*Eo|

+----[SHA256]-----+

Next, run the following commands to send the SSH public key from master-1 to the other nodes. Type yes to accept each server's SSH fingerprint, then enter the root password when prompted.

ssh-copy-id root@192.168.9.61

ssh-copy-id root@192.168.9.62

ssh-copy-id root@192.168.9.63

ssh-copy-id root@192.168.9.65

ssh-copy-id root@192.168.9.66

ssh-copy-id root@192.168.9.67

Note: IP addresses are used here rather than hostnames.

Below is the expected output when the key is copied.
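The six ssh-copy-id commands can also be generated by a loop. The sketch below assumes the node IPs from the server table; `NODE_IPS` and `copy_key_to_nodes` are hypothetical names. By default it only prints the commands. Passing `run` executes them, which still prompts for each password.

```shell
# Node IPs from the server table in this article.
NODE_IPS="192.168.9.61 192.168.9.62 192.168.9.63 192.168.9.65 192.168.9.66 192.168.9.67"

# Print one ssh-copy-id command per node; with "run" as the first argument,
# execute the commands instead of printing them.
copy_key_to_nodes() {
  mode="${1:-print}"
  for ip in $NODE_IPS; do
    if [ "$mode" = "run" ]; then
      ssh-copy-id "root@$ip"
    else
      echo "ssh-copy-id root@$ip"
    fi
  done
}

copy_key_to_nodes
```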

[root@ks-master-1 ~]# ssh-copy-id root@192.168.9.61

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/root/.ssh/id_ed25519.pub"

The authenticity of host '192.168.9.61 (192.168.9.61)' can't be established.

ECDSA key fingerprint is SHA256:xsUsY99br/wAEbF9BvTsSNWXPRhrkTtYcOTABMXSuCU.

ECDSA key fingerprint is MD5:56:f3:5d:1a:10:fd:89:bf:6e:e4:fa:77:ac:48:51:0b.

Are you sure you want to continue connecting (yes/no)? yes

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@192.168.9.61's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'root@192.168.9.61'"

and check to make sure that only the key(s) you wanted were added.

After the SSH public key has been added and uploaded, run the following command to verify that you can connect to every server as root without a password.

[root@ks-master-1 ~]# ssh root@192.168.9.61

# Login output omitted

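Advanced operating system configuration

The steps in this section are optional but recommended.

This section has a single topic: upgrading the kernel of CentOS 7.9.

The N sins of the default CentOS 7.9 kernel (is that too harsh?).

- The default kernel version of CentOS 7.9 is 3.10.0-1160.71.1, which is really old compared with other operating systems that routinely ship 5.x or 6.x kernels.

- Some companies no longer accept 3.x kernels under their security policies, so an upgrade is mandatory.

- More importantly, some features of newer Kubernetes versions and related components depend on a newer kernel. I do not know exactly which ones or how many; I have come across examples but did not record them.

- There are probably many more reasons to upgrade. Even if there were none, I would still find myself a reason to upgrade the kernel.

For these reasons, we choose to upgrade the CentOS 7.9 kernel.

The upgrade uses the widely used ELRepo repository to install the new kernel directly online.

For the kernel version, the long-term support release is recommended. Long-term support packages are named kernel-lt, and the latest mainline stable packages are named kernel-ml.

Now let's go through the actual kernel upgrade steps.

Check the current kernel version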

# uname -r

3.10.0-1160.71.1.el7.x86_64

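List the kernel-related packages on the current system

Check which kernel-related packages are installed. When upgrading the kernel, make sure the related kernel packages that are already installed get upgraded as well.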

# rpm -qa | grep kernel

kernel-3.10.0-1160.71.1.el7.x86_64

kernel-tools-3.10.0-1160.71.1.el7.x86_64

kernel-tools-libs-3.10.0-1160.71.1.el7.x86_64

Add the ELRepo repository

rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org

yum install https://www.elrepo.org/elrepo-release-7.el7.elrepo.noarch.rpm

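List the available kernel packages

Enable the newly added ELRepo repository and list the available kernel packages.

yum --disablerepo="*" --enablerepo="elrepo-kernel" list available

The command succeeds with the following output: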

[root@ks-master-1 ~]# yum --disablerepo="*" --enablerepo="elrepo-kernel" list available

Loaded plugins: fastestmirror

Loading mirror speeds from cached hostfile

* elrepo-kernel: hkg.mirror.rackspace.com

Available Packages

kernel-lt.x86_64 5.4.251-1.el7.elrepo elrepo-kernel

kernel-lt-devel.x86_64 5.4.251-1.el7.elrepo elrepo-kernel

kernel-lt-doc.noarch 5.4.251-1.el7.elrepo elrepo-kernel

kernel-lt-headers.x86_64 5.4.251-1.el7.elrepo elrepo-kernel

kernel-lt-tools.x86_64 5.4.251-1.el7.elrepo elrepo-kernel

kernel-lt-tools-libs.x86_64 5.4.251-1.el7.elrepo elrepo-kernel

kernel-lt-tools-libs-devel.x86_64 5.4.251-1.el7.elrepo elrepo-kernel

kernel-ml.x86_64 6.4.7-1.el7.elrepo elrepo-kernel

kernel-ml-devel.x86_64 6.4.7-1.el7.elrepo elrepo-kernel

kernel-ml-doc.noarch 6.4.7-1.el7.elrepo elrepo-kernel

kernel-ml-headers.x86_64 6.4.7-1.el7.elrepo elrepo-kernel

kernel-ml-tools.x86_64 6.4.7-1.el7.elrepo elrepo-kernel

kernel-ml-tools-libs.x86_64 6.4.7-1.el7.elrepo elrepo-kernel

kernel-ml-tools-libs-devel.x86_64 6.4.7-1.el7.elrepo elrepo-kernel

perf.x86_64 5.4.251-1.el7.elrepo elrepo-kernel

python-perf.x86_64 5.4.251-1.el7.elrepo elrepo-kernel

Note: the latest lt kernel is currently 5.4.251-1 and the latest ml kernel is 6.4.7-1. As planned, we install kernel 5.4.251-1.

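Install the new kernel

Here we install only the kernel itself. Installing the other packages at the same time causes an error (see Problem 1 for the error message).

yum --enablerepo=elrepo-kernel install kernel-lt

The command succeeds with the following output.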

[root@ks-master-1 ~]# yum -y --enablerepo=elrepo-kernel install kernel-lt

Loaded plugins: fastestmirror

Loading mirror speeds from cached hostfile

* base: mirrors.aliyun.com

* elrepo: hkg.mirror.rackspace.com

* elrepo-kernel: hkg.mirror.rackspace.com

* extras: mirrors.aliyun.com

* updates: mirrors.aliyun.com

Resolving Dependencies

--> Running transaction check

---> Package kernel-lt.x86_64 0:5.4.251-1.el7.elrepo will be installed

--> Finished Dependency Resolution

Dependencies Resolved

==========================================================================================================================================================================================

Package Arch Version Repository Size

==========================================================================================================================================================================================

Installing:

kernel-lt x86_64 5.4.251-1.el7.elrepo elrepo-kernel 50 M

Transaction Summary

==========================================================================================================================================================================================

Install 1 Package

Total download size: 50 M

Installed size: 230 M

Downloading packages:

kernel-lt-5.4.251-1.el7.elrepo.x86_64.rpm | 50 MB 00:00:01

Running transaction check

Running transaction test

Transaction test succeeded

Running transaction

Installing : kernel-lt-5.4.251-1.el7.elrepo.x86_64 1/1

Verifying : kernel-lt-5.4.251-1.el7.elrepo.x86_64 1/1

Installed:

kernel-lt.x86_64 0:5.4.251-1.el7.elrepo

Complete!

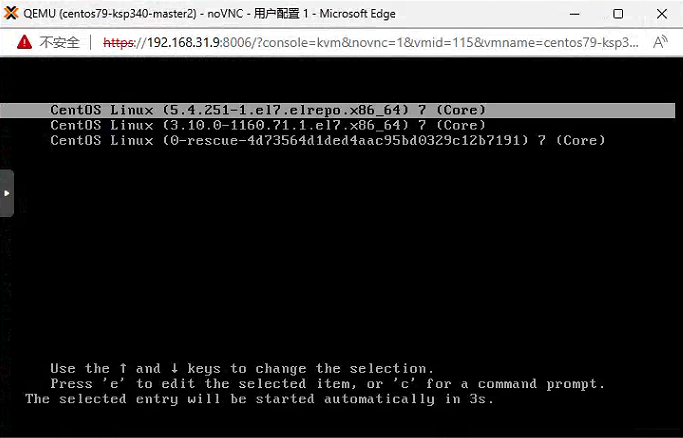

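Configure the system to boot the new kernel

After the kernel upgrade command succeeds, the default kernel is still the old one. If you run reboot at this point, the system still boots the default 3.10 kernel instead of the new 5.4.251 kernel.

List all kernels known to the boot loader.

grubby --info=ALL | grep ^kernel

The command succeeds with the following output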

[root@ks-master-1 ~]# grubby --info=ALL | grep ^kernel

kernel=/boot/vmlinuz-5.4.251-1.el7.elrepo.x86_64

kernel=/boot/vmlinuz-3.10.0-1160.71.1.el7.x86_64

kernel=/boot/vmlinuz-0-rescue-4d73564d1ded4aac95bd0329c12b7191

Check the current default kernel.

grubby --default-kernel

The command succeeds and shows that the default kernel is still 3.10.0-1160.71.1

[root@ks-master-1 ~]# grubby --default-kernel

/boot/vmlinuz-3.10.0-1160.71.1.el7.x86_64

Set the new kernel as the default.

grubby --set-default "/boot/vmlinuz-5.4.251-1.el7.elrepo.x86_64"

Checking again shows that the default kernel configuration has been updated.

[root@ks-master-1 ~]# grubby --default-kernel

/boot/vmlinuz-5.4.251-1.el7.elrepo.x86_64

reboot

On the boot screen, the new kernel is now the first boot entry.

Reboot the system and check the kernel

[root@ks-master-1 ~]# uname -rs

Linux 5.4.251-1.el7.elrepo.x86_64

[root@ks-master-1 ~]# rpm -qa | grep kernel

kernel-lt-5.4.251-1.el7.elrepo.x86_64

kernel-3.10.0-1160.71.1.el7.x86_64

kernel-tools-3.10.0-1160.71.1.el7.x86_64

kernel-tools-libs-3.10.0-1160.71.1.el7.x86_64
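To check the running kernel from a script rather than by eye, a version comparison along the following lines could be used. This is a sketch: `kernel_at_least` is a hypothetical helper, and it assumes a `sort` that supports `-V` (version sort), as GNU coreutils does.

```shell
# Succeeds if kernel release $1 (e.g. the output of "uname -r") is at least
# version $2. Everything after the first "-" in the release is ignored.
kernel_at_least() {
  current="${1%%-*}"
  required="$2"
  lowest=$(printf '%s\n%s\n' "$current" "$required" | sort -V | head -n 1)
  [ "$lowest" = "$required" ]
}

# Demonstration with the release string shown above; on a real node use:
#   kernel_at_least "$(uname -r)" 5.4
kernel_at_least 5.4.251-1.el7.elrepo.x86_64 5.4 && echo "kernel OK"
```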

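After the system has booted into the new kernel, install the other kernel-related packages to resolve the dependency conflict encountered earlier.

# Remove the old kernel-tools packages

yum remove kernel-tools-3.10.0-1160.71.1.el7.x86_64 kernel-tools-libs-3.10.0-1160.71.1.el7.x86_64

# Install the new kernel-tools packages

yum --enablerepo=elrepo-kernel install kernel-lt-tools kernel-lt-tools-libs

Note: this step is only suitable for freshly installed systems. On servers that already run other applications, evaluate carefully and proceed with caution.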

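Install and deploy KubeSphere and Kubernetes

Note: this article only aims to show whether the combination of KubeSphere v3.4.0 and Kubernetes v1.27 installs smoothly and whether common KubeSphere features work. The following steps are therefore suitable only for demo, learning, and test environments, not for production.

Download KubeKey

This article uses master-1 as the deployment node and downloads the latest KubeKey binary (v3.0.9) to that server. You can look up KubeKey version numbers on the KubeKey releases page.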

cd ~

mkdir kubekey

cd kubekey/

# Use the China download zone (for when access to GitHub is restricted)

export KKZONE=cn

curl -sfL https://get-kk.kubesphere.io | sh -

# Alternatively, pin a specific version with the command below

curl -sfL https://get-kk.kubesphere.io | VERSION=v3.0.9 sh -

# Expected output

[root@ks-master-1 ~]# cd ~

[root@ks-master-1 ~]# mkdir kubekey

[root@ks-master-1 ~]# cd kubekey/

[root@ks-master-1 kubekey]# export KKZONE=cn

[root@ks-master-1 kubekey]# curl -sfL https://get-kk.kubesphere.io | sh -

Downloading kubekey v3.0.9 from https://kubernetes.pek3b.qingstor.com/kubekey/releases/download/v3.0.9/kubekey-v3.0.9-linux-amd64.tar.gz ...

Kubekey v3.0.9 Download Complete!

[root@ks-master-1 kubekey]# ls -lh

total 110M

-rwxr-xr-x 1 root root 76M Jul 21 10:24 kk

-rw-r--r-- 1 root root 35M Jul 28 09:10 kubekey-v3.0.9-linux-amd64.tar.gz

- List the Kubernetes versions supported by KubeKey

./kk version --show-supported-k8s

[root@ks-master-1 kubekey]# ./kk version --show-supported-k8s

v1.19.0

v1.19.8

v1.19.9

v1.19.15

v1.20.4

v1.20.6

v1.20.10

v1.21.0

v1.21.1

v1.21.2

v1.21.3

v1.21.4

v1.21.5

v1.21.6

v1.21.7

v1.21.8

v1.21.9

v1.21.10

v1.21.11

v1.21.12

v1.21.13

v1.21.14

v1.22.0

v1.22.1

v1.22.2

v1.22.3

v1.22.4

v1.22.5

v1.22.6

v1.22.7

v1.22.8

v1.22.9

v1.22.10

v1.22.11

v1.22.12

v1.22.13

v1.22.14

v1.22.15

v1.22.16

v1.22.17

v1.23.0

v1.23.1

v1.23.2

v1.23.3

v1.23.4

v1.23.5

v1.23.6

v1.23.7

v1.23.8

v1.23.9

v1.23.10

v1.23.11

v1.23.12

v1.23.13

v1.23.14

v1.23.15

v1.23.16

v1.23.17

v1.24.0

v1.24.1

v1.24.2

v1.24.3

v1.24.4

v1.24.5

v1.24.6

v1.24.7

v1.24.8

v1.24.9

v1.24.10

v1.24.11

v1.24.12

v1.24.13

v1.24.14

v1.25.0

v1.25.1

v1.25.2

v1.25.3

v1.25.4

v1.25.5

v1.25.6

v1.25.7

v1.25.8

v1.25.9

v1.25.10

v1.26.0

v1.26.1

v1.26.2

v1.26.3

v1.26.4

v1.26.5

v1.27.0

v1.27.1

v1.27.2

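Note: this list shows what KubeKey supports. It does not guarantee that KubeSphere and other Kubernetes-related components work perfectly with every version. Here we pick the latest, v1.27.2. For production, v1.24 or v1.26 is recommended.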
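With a list this long, picking the newest patch release of a given minor version by eye is error-prone. As a sketch, the hypothetical filter `latest_patch_for_minor` below reads the version list on stdin and prints the highest patch release for the requested minor. It assumes a `sort` with `-V` support and treats the minor string as a regular expression, so the dot in `v1.26` matches any character.

```shell
# Print the highest patch release for minor version $1 (e.g. v1.26) from a
# list of versions on stdin, one per line.
latest_patch_for_minor() {
  grep -E "^$1\.[0-9]+\$" | sort -V | tail -n 1
}

# Demonstration with a few versions from the list above; on the deployment
# node use:
#   ./kk version --show-supported-k8s | latest_patch_for_minor v1.26
printf 'v1.25.9\nv1.25.10\nv1.26.0\nv1.26.5\nv1.26.4\nv1.27.2\n' |
  latest_patch_for_minor v1.26
```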

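Create the Kubernetes and KubeSphere deployment configuration file

Create the cluster configuration file. This example selects KubeSphere v3.4.0 and Kubernetes v1.27.2 and names the file kubesphere-v3.4.0.yaml. If no name is given, the default file name is config-sample.yaml.

./kk create config -f kubesphere-v3.4.0.yaml --with-kubernetes v1.27.2 --with-kubesphere v3.4.0

After the command succeeds, a configuration file named kubesphere-v3.4.0.yaml is generated in the current directory.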

[root@ks-master-1 kubekey]# ./kk create config -f kubesphere-v3.4.0.yaml --with-kubernetes v1.27.2 --with-kubesphere v3.4.0

Generate KubeKey config file successfully

[root@ks-master-1 kubekey]# ls -lh

total 110M

-rwxr-xr-x 1 root root 76M Jul 21 10:24 kk

-rw-r--r-- 1 root root 35M Jul 28 09:10 kubekey-v3.0.9-linux-amd64.tar.gz

-rw-r--r-- 1 root root 5.2K Jul 28 09:11 kubesphere-v3.4.0.yaml

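Note: the generated default configuration file is long, so it is not shown in full here. For more configuration parameters, see the official configuration example.

This example uses 3 nodes as both control-plane and etcd nodes, and 3 nodes as worker nodes.

Edit the configuration file kubesphere-v3.4.0.yaml. The main changes are in the kind: Cluster and kind: ClusterConfiguration sections.

In the kind: Cluster section, change hosts, roleGroups, and related settings as described below.

- hosts: specifies each node's IP, SSH user, SSH password, SSH key, and SSH port. The example shows password and key authentication side by side, as well as how to set the SSH port.

- roleGroups: specifies 3 etcd and control-plane nodes, 3 worker nodes, and ks-worker-1 as the registry node.

- internalLoadbalancer: enables the built-in HAProxy load balancer.

- domain: a custom domain, lb.opsman.top.

- containerManager: containerd is used.

- registry: the example adds configuration for automatically deploying a private Harbor. For real production environments, deploying it manually is recommended. Note that the default cluster creation command does not deploy Harbor; a separate command is required.

The modified example is as follows: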

apiVersion: kubekey.kubesphere.io/v1alpha2
kind: Cluster
metadata:
  name: sample
spec:
  hosts:
  - {name: ks-master-1, address: 192.168.9.61, internalAddress: 192.168.9.61, port: 22, user: root, password: "P@88w0rd"}
  - {name: ks-master-2, address: 192.168.9.62, internalAddress: 192.168.9.62, user: root, privateKeyPath: "~/.ssh/id_ed25519"}
  - {name: ks-master-3, address: 192.168.9.63, internalAddress: 192.168.9.63, user: root, privateKeyPath: "~/.ssh/id_ed25519"}
  - {name: ks-worker-1, address: 192.168.9.65, internalAddress: 192.168.9.65, user: root, privateKeyPath: "~/.ssh/id_ed25519"}
  - {name: ks-worker-2, address: 192.168.9.66, internalAddress: 192.168.9.66, user: root, privateKeyPath: "~/.ssh/id_ed25519"}
  - {name: ks-worker-3, address: 192.168.9.67, internalAddress: 192.168.9.67, user: root, privateKeyPath: "~/.ssh/id_ed25519"}
  roleGroups:
    etcd:
    - ks-master-1
    - ks-master-2
    - ks-master-3
    control-plane:
    - ks-master-1
    - ks-master-2
    - ks-master-3
    worker:
    - ks-worker-1
    - ks-worker-2
    - ks-worker-3
    registry:
    - ks-worker-1
  controlPlaneEndpoint:
    ## Internal loadbalancer for apiservers
    internalLoadbalancer: haproxy
    domain: lb.opsman.top
    address: ""
    port: 6443
  kubernetes:
    version: v1.27.2
    clusterName: opsman.top
    autoRenewCerts: true
    containerManager: containerd
  etcd:
    type: kubekey
  network:
    plugin: calico
    kubePodsCIDR: 10.233.64.0/18
    kubeServiceCIDR: 10.233.0.0/18
    ## multus support. https://github.com/k8snetworkplumbingwg/multus-cni
    multusCNI:
      enabled: false
  registry:
    type: "harbor"
    privateRegistry: ""
    namespaceOverride: ""
    registryMirrors: []
    insecureRegistries: []
  addons: []

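In the kind: ClusterConfiguration section, enable the pluggable components as described below.

- Enable etcd monitoring

etcd:
  monitoring: true # Change "false" to "true"
  endpointIps: localhost
  port: 2379
  tlsEnable: true

- Enable the App Store

openpitrix:
  store:
    enabled: true # Change "false" to "true"

- Enable DevOps

devops:
  enabled: true # Change "false" to "true"

- Enable logging

logging:
  enabled: true # Change "false" to "true"
  containerruntime: containerd

- Enable events

events:
  enabled: true # Change "false" to "true"

Note: by default, enabling the events system makes KubeKey install a built-in Elasticsearch. For production, enabling the events system at cluster deployment time is not recommended. Configure it manually after deployment by following the official pluggable components documentation.

- Enable alerting

alerting:
  enabled: true # Change "false" to "true"

- Enable auditing

auditing:
  enabled: true # Change "false" to "true"

- Enable the service mesh

servicemesh:
  enabled: true # Change "false" to "true"
  istio: # Customizing the istio installation configuration, refer to https://istio.io/latest/docs/setup/additional-setup/customize-installation/
    components:
      ingressGateways:
      - name: istio-ingressgateway # Exposes services outside the service mesh. Disabled by default.
        enabled: false
      cni:
        enabled: false # When enabled, Istio sets up Pod traffic redirection during the network setup phase of the Kubernetes Pod lifecycle.

- Enable Metrics Server

metrics_server:
  enabled: true # Change "false" to "true"

Note: KubeSphere supports the Horizontal Pod Autoscaler (HPA) for Deployments. In KubeSphere, the Metrics Server determines whether HPA is available.

- Enable network policies, Pod IP pools, and the service topology (listed by name, in the same order as the configuration parameters)

network:
  networkpolicy:
    enabled: true # Change "false" to "true"
  ippool:
    type: calico # Change "none" to "calico"
  topology:
    type: weave-scope # Change "none" to "weave-scope"

Notes:

- Since version 3.0.0, users can configure native Kubernetes network policies in KubeSphere.

- Pod IP pools are used to plan the Pod network address space. The address spaces of different Pod IP pools must not overlap.

- Enabling the service topology integrates Weave Scope (a visualization and monitoring tool for Docker and Kubernetes). The service topology is displayed in your project and visualizes the connections between services.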

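Deploy KubeSphere and Kubernetes

Next, run the following commands to deploy KubeSphere and Kubernetes with the configuration file generated above.

export KKZONE=cn

./kk create cluster -f kubesphere-v3.4.0.yaml

After the command starts, KubeKey first checks the dependencies and other requirements for deploying Kubernetes. Once the check passes, you are prompted to confirm the installation. Type yes and press ENTER to continue.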

[root@ks-master-1 kubekey]# export KKZONE=cn

[root@ks-master-1 kubekey]# ./kk create cluster -f kubesphere-v3.4.0.yaml

_ __ _ _ __

| | / / | | | | / /

| |/ / _ _| |__ ___| |/ / ___ _ _

| \| | | | '_ \ / _ \ \ / _ \ | | |

| |\ \ |_| | |_) | __/ |\ \ __/ |_| |

\_| \_/\__,_|_.__/ \___\_| \_/\___|\__, |

__/ |

|___/

11:22:22 CST [GreetingsModule] Greetings

11:22:23 CST message: [ks-master-3]

Greetings, KubeKey!

11:22:23 CST message: [ks-worker-3]

Greetings, KubeKey!

11:22:23 CST message: [ks-worker-1]

Greetings, KubeKey!

11:22:23 CST message: [ks-worker-2]

Greetings, KubeKey!

11:22:23 CST message: [ks-master-1]

Greetings, KubeKey!

11:22:23 CST message: [ks-master-2]

Greetings, KubeKey!

11:22:23 CST success: [ks-master-3]

11:22:23 CST success: [ks-worker-3]

11:22:23 CST success: [ks-worker-1]

11:22:23 CST success: [ks-worker-2]

11:22:23 CST success: [ks-master-1]

11:22:23 CST success: [ks-master-2]

11:22:23 CST [NodePreCheckModule] A pre-check on nodes

11:22:23 CST success: [ks-worker-2]

11:22:23 CST success: [ks-master-2]

11:22:23 CST success: [ks-master-1]

11:22:23 CST success: [ks-master-3]

11:22:23 CST success: [ks-worker-1]

11:22:23 CST success: [ks-worker-3]

11:22:23 CST [ConfirmModule] Display confirmation form

+-------------+------+------+---------+----------+-------+-------+---------+-----------+--------+--------+------------+------------+-------------+------------------+--------------+

| name | sudo | curl | openssl | ebtables | socat | ipset | ipvsadm | conntrack | chrony | docker | containerd | nfs client | ceph client | glusterfs client | time |

+-------------+------+------+---------+----------+-------+-------+---------+-----------+--------+--------+------------+------------+-------------+------------------+--------------+

| ks-worker-1 | y | y | y | y | y | y | y | y | y | | | | | | CST 11:22:23 |

| ks-worker-2 | y | y | y | y | y | y | y | y | y | | | | | | CST 11:22:23 |

| ks-worker-3 | y | y | y | y | y | y | y | y | y | | | | | | CST 11:22:23 |

| ks-master-1 | y | y | y | y | y | y | y | y | y | | | | | | CST 11:22:23 |

| ks-master-2 | y | y | y | y | y | y | y | y | y | | | | | | CST 11:22:23 |

| ks-master-3 | y | y | y | y | y | y | y | y | y | | | | | | CST 11:22:23 |

+-------------+------+------+---------+----------+-------+-------+---------+-----------+--------+--------+------------+------------+-------------+------------------+--------------+

This is a simple check of your environment.

Before installation, ensure that your machines meet all requirements specified at

https://github.com/kubesphere/kubekey#requirements-and-recommendations

Continue this installation? [yes/no]:

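Note: the check results show that the three storage-related clients (nfs client, ceph client, and glusterfs client) are not installed. This can be ignored for this article.

The installation log is long and is omitted here to save space.

Deployment takes roughly 10 to 30 minutes, depending on network speed, machine specs, and how many components are enabled. This deployment took 30 minutes.

When deployment finishes, you should see output similar to the following in the terminal. Along with the completion message, the output shows the default administrator user and password for logging in to KubeSphere.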

11:35:18 CST [DeployKubeSphereModule] Apply ks-installer

11:35:19 CST stdout: [ks-master-1]

namespace/kubesphere-system unchanged

serviceaccount/ks-installer unchanged

customresourcedefinition.apiextensions.k8s.io/clusterconfigurations.installer.kubesphere.io unchanged

clusterrole.rbac.authorization.k8s.io/ks-installer unchanged

clusterrolebinding.rbac.authorization.k8s.io/ks-installer unchanged

deployment.apps/ks-installer unchanged

clusterconfiguration.installer.kubesphere.io/ks-installer created

11:35:19 CST skipped: [ks-master-3]

11:35:19 CST skipped: [ks-master-2]

11:35:19 CST success: [ks-master-1]

#####################################################

### Welcome to KubeSphere! ###

#####################################################

Console: http://192.168.9.61:30880

Account: admin

Password: P@88w0rd

NOTES:

1. After you log into the console, please check the

monitoring status of service components in

"Cluster Management". If any service is not

ready, please wait patiently until all components

are up and running.

2. Please change the default password after login.

#####################################################

https://kubesphere.io 2023-07-28 11:52:37

#####################################################

11:52:42 CST skipped: [ks-master-3]

11:52:42 CST skipped: [ks-master-2]

11:52:42 CST success: [ks-master-1]

11:52:42 CST Pipeline[CreateClusterPipeline] execute successfully

Installation is complete.

Please check the result using the command:

kubectl logs -n kubesphere-system $(kubectl get pod -n kubesphere-system -l 'app in (ks-install, ks-installer)' -o jsonpath='{.items[0].metadata.name}') -f

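Special note

To keep the text coherent, the three sections above keep the Kubernetes version as v1.27.2. In reality, running ./kk create cluster -f kubesphere-v3.4.0.yaml hit an error and the deployment failed. Two attempts produced the same error, so I reluctantly switched to v1.26.5 and ran the deployment again. It succeeded on the first try!

For the error details, see Problem 3.

Deploy Harbor

The cluster configuration file includes Harbor settings, but the cluster creation command does not install Harbor automatically; a separate command is required. See the offline deployment guide on the official website for details.

For production or otherwise important environments, it is strongly recommended not to install Harbor on an existing Kubernetes cluster node. Use a dedicated machine.

Run the following command to install the Harbor image registry (the installation package is 629 MB).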

./kk init registry -f kubesphere-v3.4.0.yaml

The command starts and produces the following output:

[root@ks-master-1 kubekey]# ./kk init registry -f kubesphere-v3.4.0.yaml

_ __ _ _ __

| | / / | | | | / /

| |/ / _ _| |__ ___| |/ / ___ _ _

| \| | | | '_ \ / _ \ \ / _ \ | | |

| |\ \ |_| | |_) | __/ |\ \ __/ |_| |

\_| \_/\__,_|_.__/ \___\_| \_/\___|\__, |

__/ |

|___/

12:41:30 CST [GreetingsModule] Greetings

12:41:30 CST message: [ks-master-3]

Greetings, KubeKey!

12:41:30 CST message: [ks-worker-1]

Greetings, KubeKey!

12:41:31 CST message: [ks-worker-3]

Greetings, KubeKey!

12:41:31 CST message: [ks-master-1]

Greetings, KubeKey!

12:41:31 CST message: [ks-master-2]

Greetings, KubeKey!

12:41:31 CST message: [ks-worker-2]

Greetings, KubeKey!

12:41:31 CST success: [ks-master-3]

12:41:31 CST success: [ks-worker-1]

12:41:31 CST success: [ks-worker-3]

12:41:31 CST success: [ks-master-1]

12:41:31 CST success: [ks-master-2]

12:41:31 CST success: [ks-worker-2]

12:41:31 CST [RegistryPackageModule] Download registry package

12:41:31 CST message: [localhost]

downloading amd64 harbor v2.5.3 ...

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 629M 100 629M 0 0 1020k 0 0:10:31 0:10:31 --:--:-- 1200k

12:51:40 CST message: [localhost]

downloading amd64 docker 20.10.8 ...

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 58.1M 100 58.1M 0 0 18.1M 0 0:00:03 0:00:03 --:--:-- 18.1M

12:51:44 CST message: [localhost]

downloading amd64 compose v2.2.2 ...

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 23.5M 100 23.5M 0 0 1007k 0 0:00:23 0:00:23 --:--:-- 1067k

12:52:08 CST success: [LocalHost]

Note: Docker is installed automatically on the Harbor node.

However, the deployment eventually failed with the following error:

12:42:11 CST [InitRegistryModule] Fetch registry certs

12:42:11 CST success: [ks-worker-1]

12:42:11 CST [InitRegistryModule] Generate registry Certs

[certs] Generating "ca" certificate and key

12:42:12 CST message: [LocalHost]

unable to sign certificate: must specify a CommonName

12:42:12 CST failed: [LocalHost]

error: Pipeline[InitRegistryPipeline] execute failed: Module[InitRegistryModule] exec failed:

failed: [LocalHost] [GenerateRegistryCerts] exec failed after 1 retries: unable to sign certificate: must specify a CommonName

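I am leaving this error as a loose end. The official documentation says very little about it, or perhaps I just did not find the right page. I did not bother to fix it, since in real environments I always deploy Harbor separately. Readers who need it can troubleshoot it themselves.

If the deployment had completed normally, the defaults would be:

- Harbor version: v2.5.3

- Harbor administrator account: admin

- Harbor administrator default password: Harbor12345

- Harbor installation directory: /opt/harbor on the Harbor node.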

Deployment verification

Next we verify the environment deployed with KubeKey v3.0.9: KubeSphere v3.4.0 and Kubernetes v1.26.5.

Verify cluster status in the KubeSphere web console

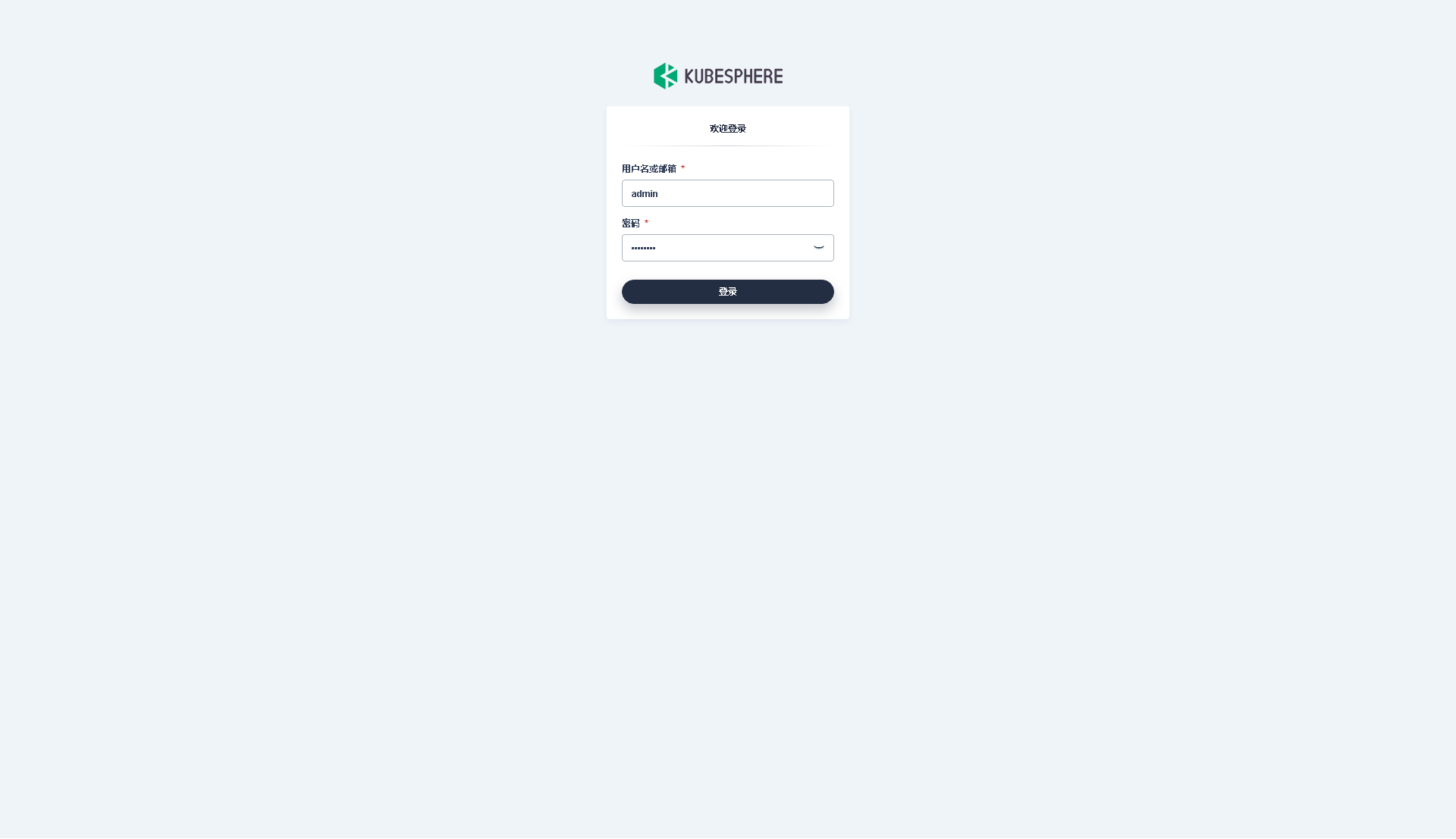

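Open a browser and go to port 30880 on the IP address of the master-1 node. The familiar KubeSphere web console login page appears.

Enter the default user admin and the default password P@88w0rd, then click "Log In".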

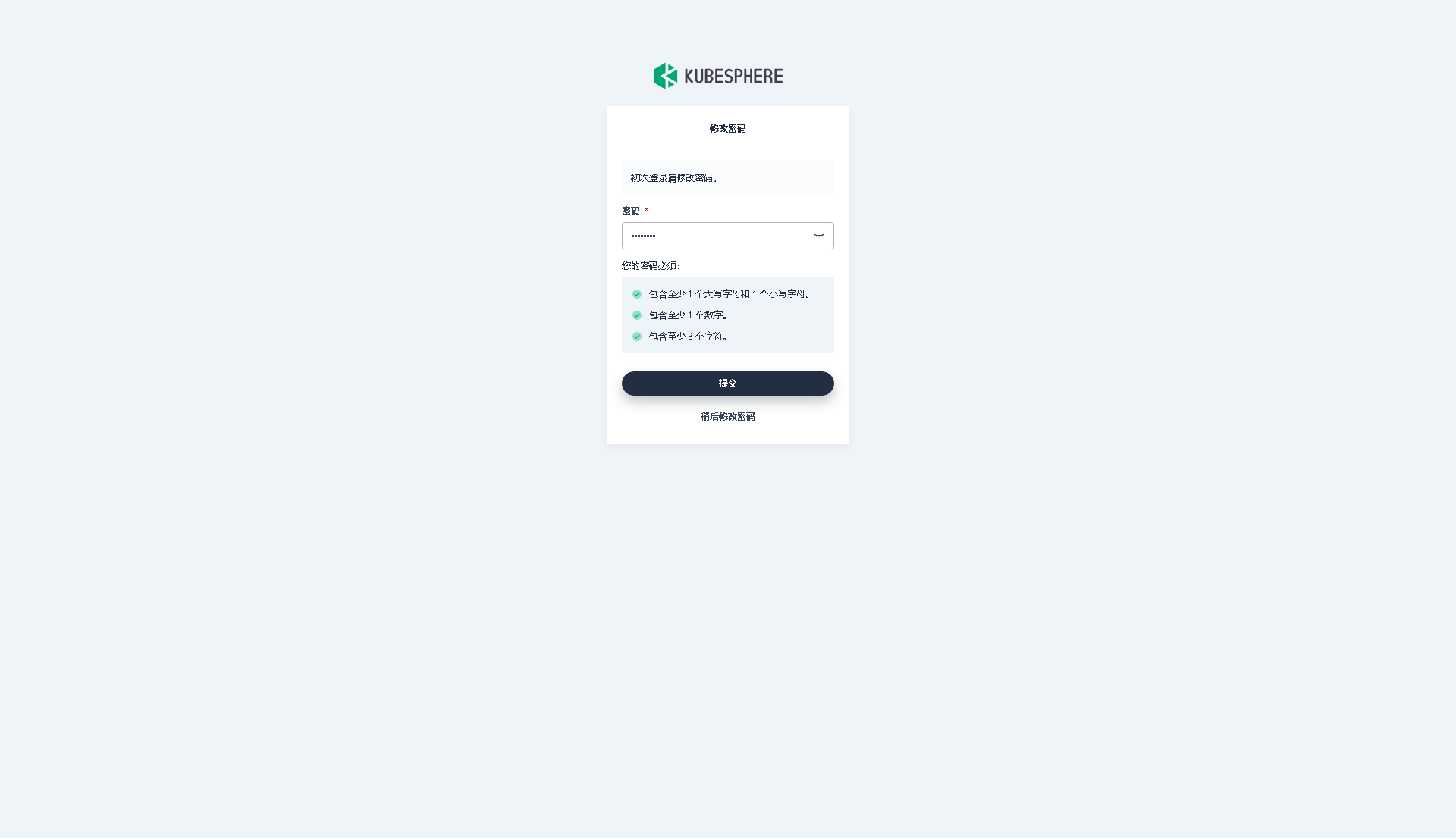

After login, the system asks you to change the default password of the default KubeSphere user admin. Enter a new password and click "Submit".

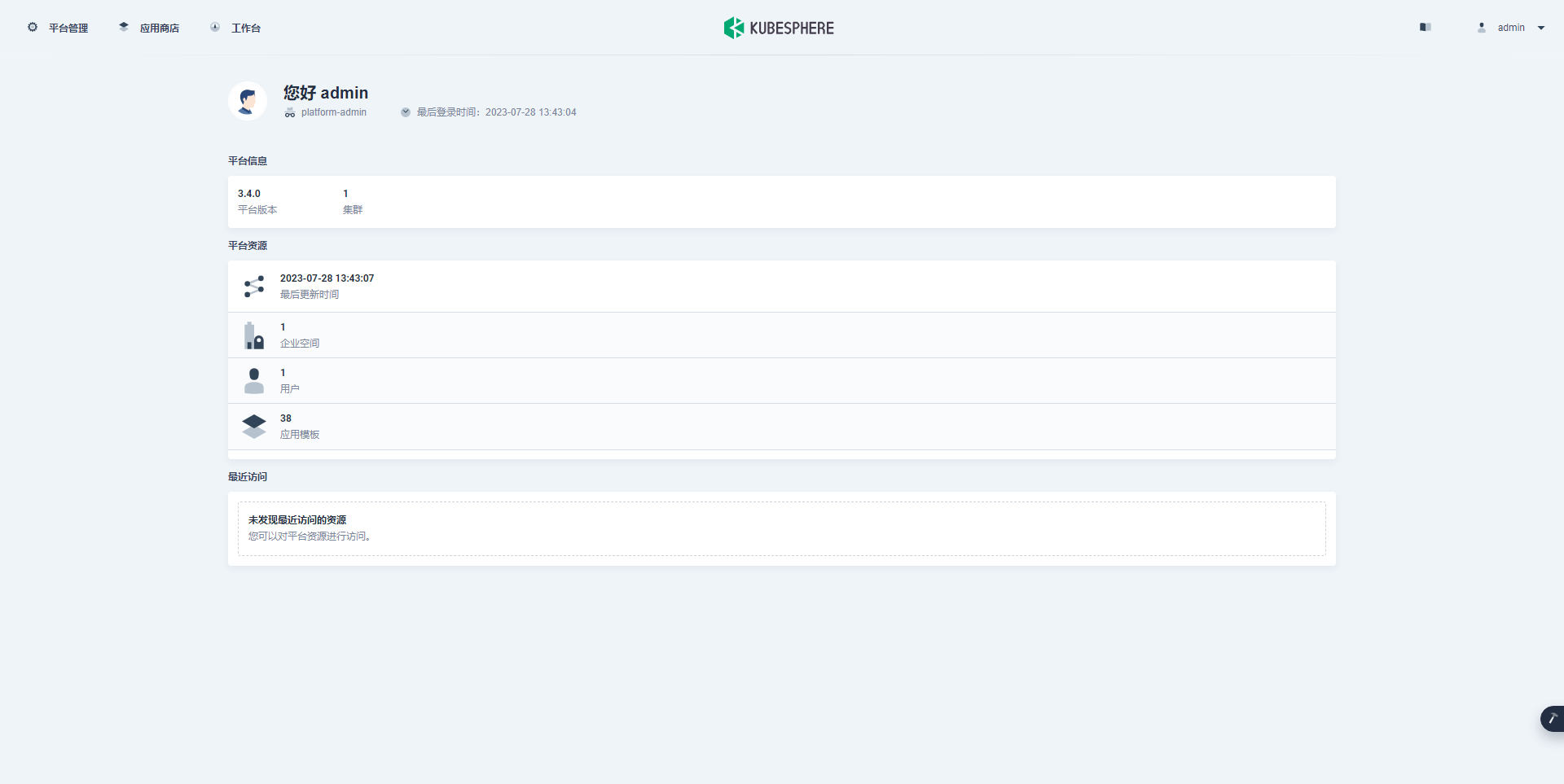

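After submitting, the system redirects to the workbench page of the KubeSphere admin user. The page shows that the current KubeSphere version is v3.4.0 and that 1 Kubernetes cluster is available.

Next, click the "Platform" menu in the upper-left corner and select "Cluster Management".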

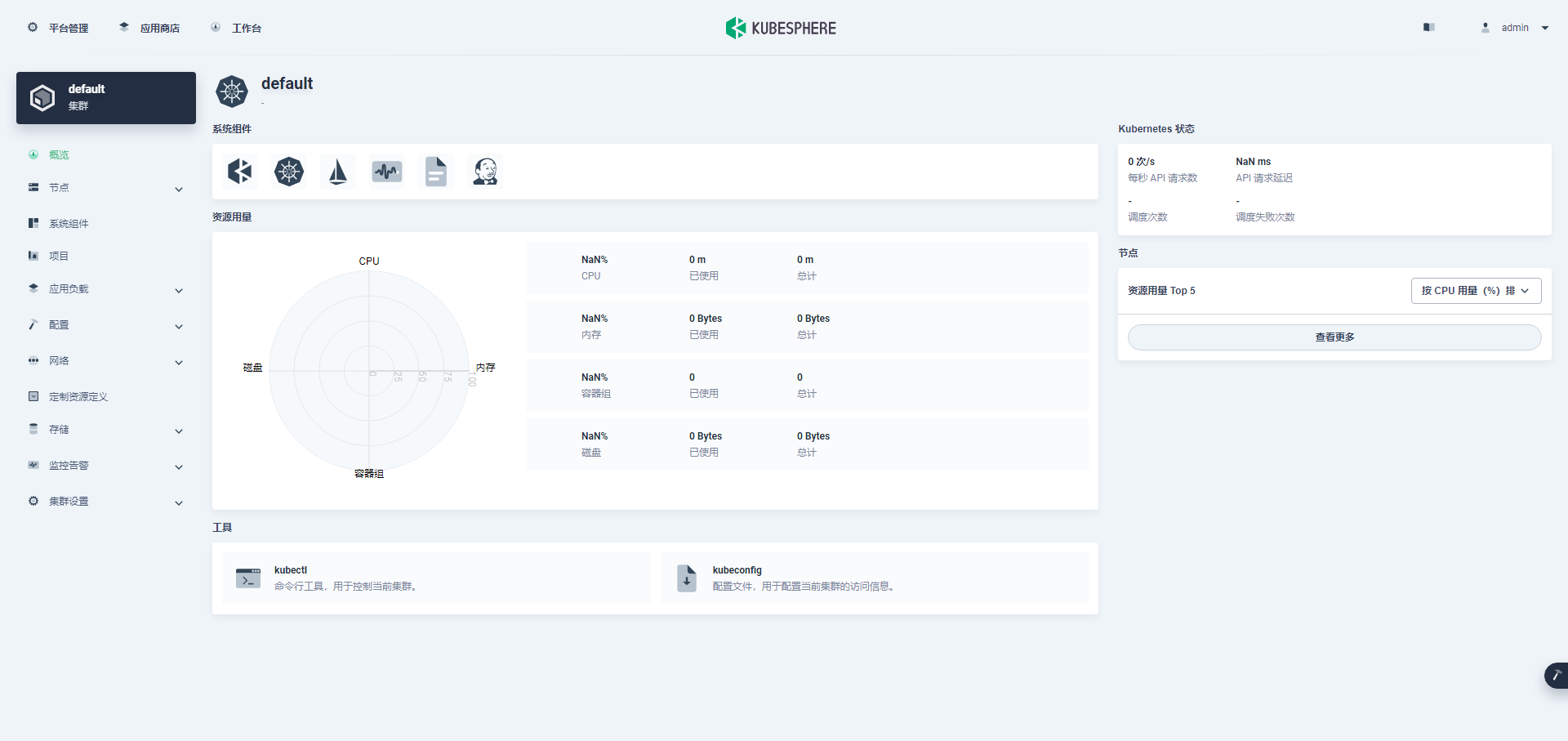

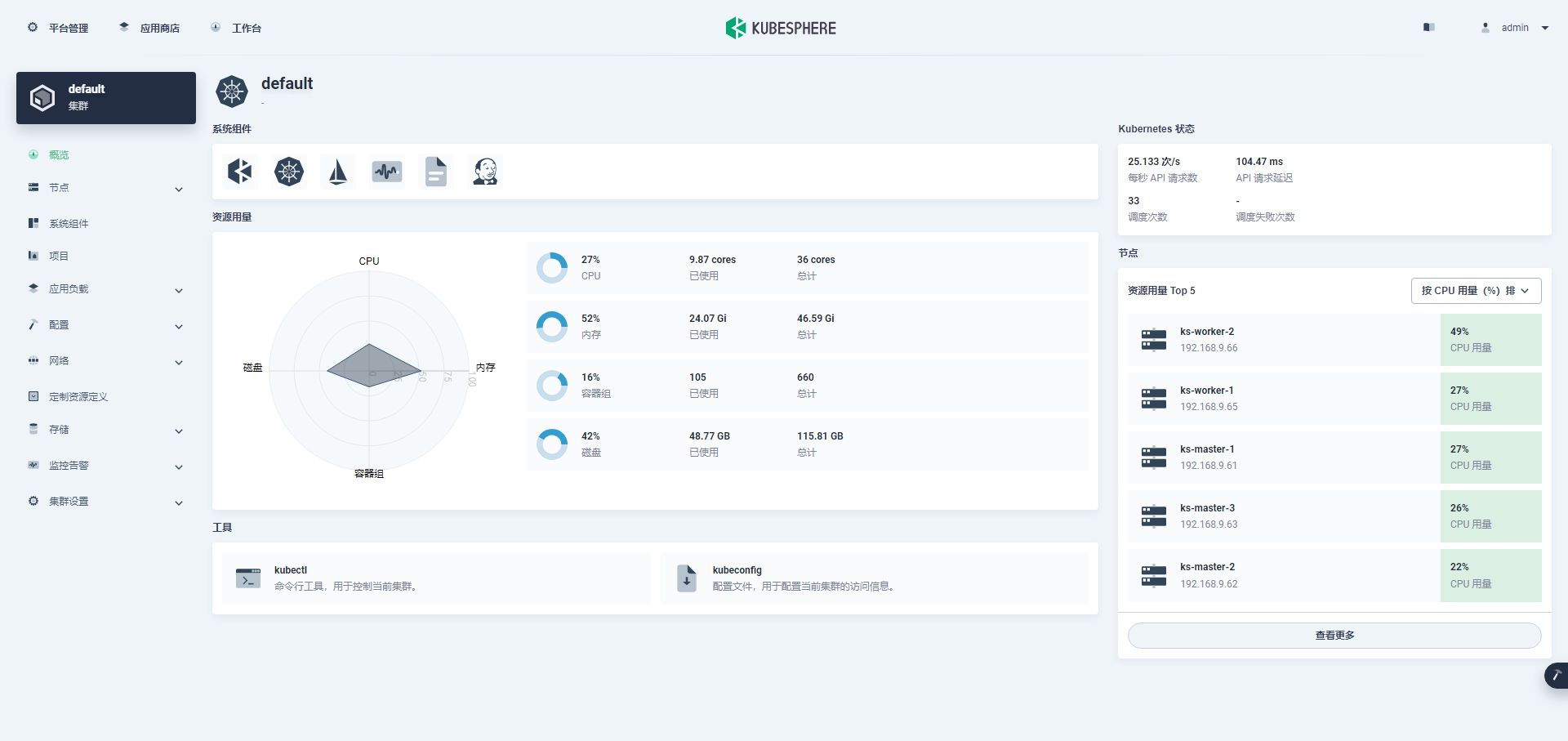

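On the cluster management page you can view basic cluster information, including cluster resource usage, Kubernetes status, top nodes by resource usage, system components, and the toolbox.

Note: on first open there is no monitoring data, mainly because the monitoring components failed to start. Screenshots of the normal state appear later.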

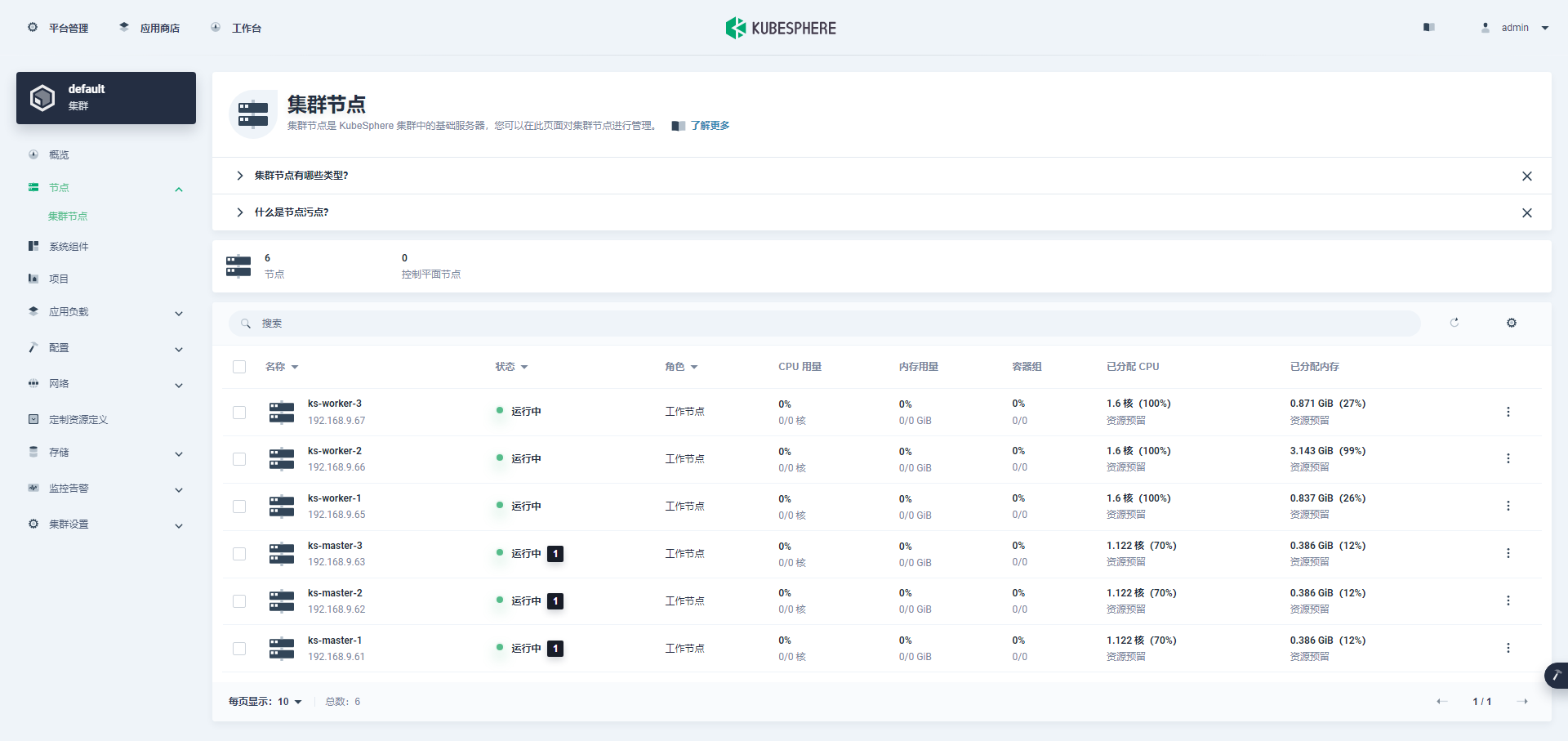

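Click the "Nodes" menu on the left and then "Cluster Nodes" to view details of the available nodes in the Kubernetes cluster.

Note: on first open there is no monitoring data, mainly because the monitoring components failed to start. Screenshots of the normal state appear later.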

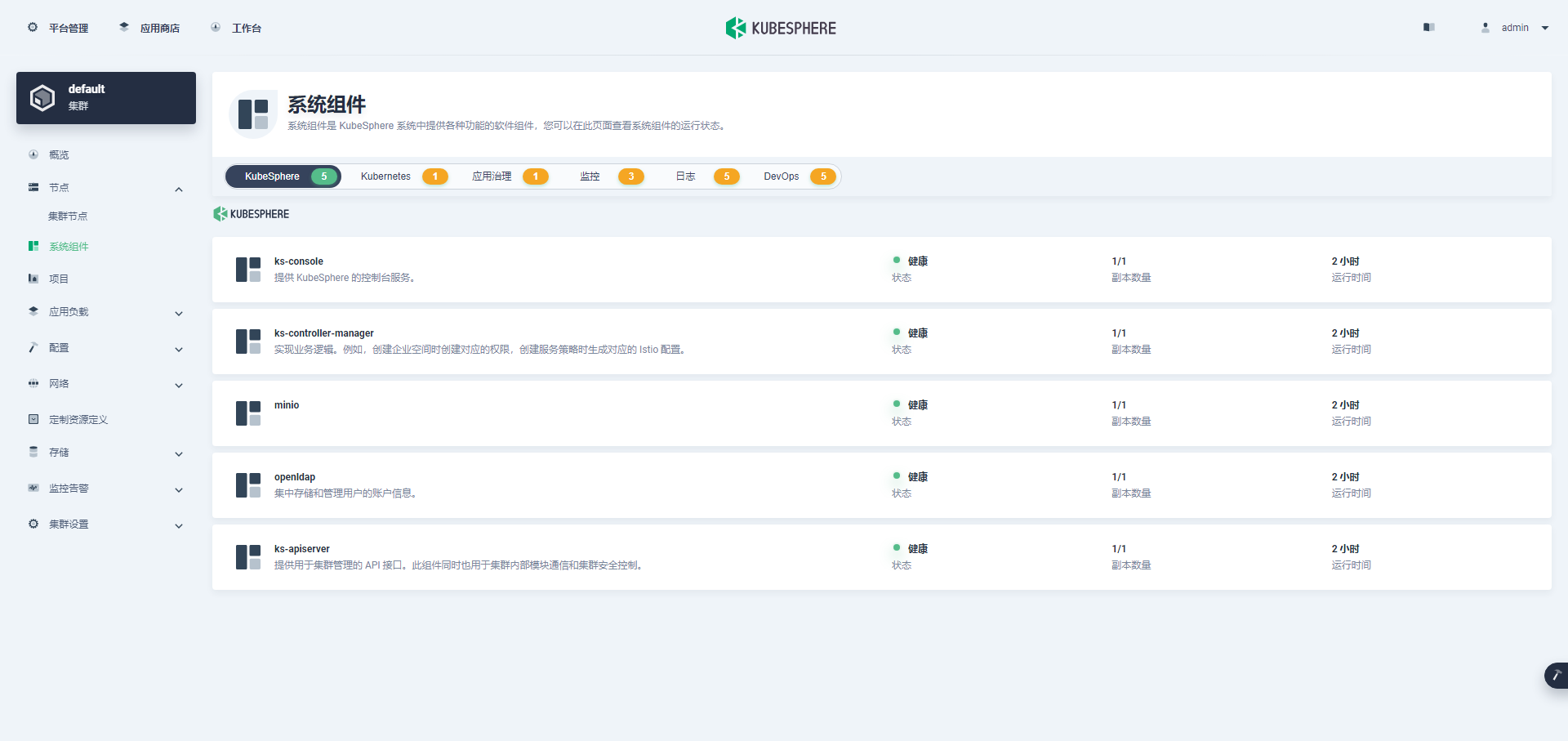

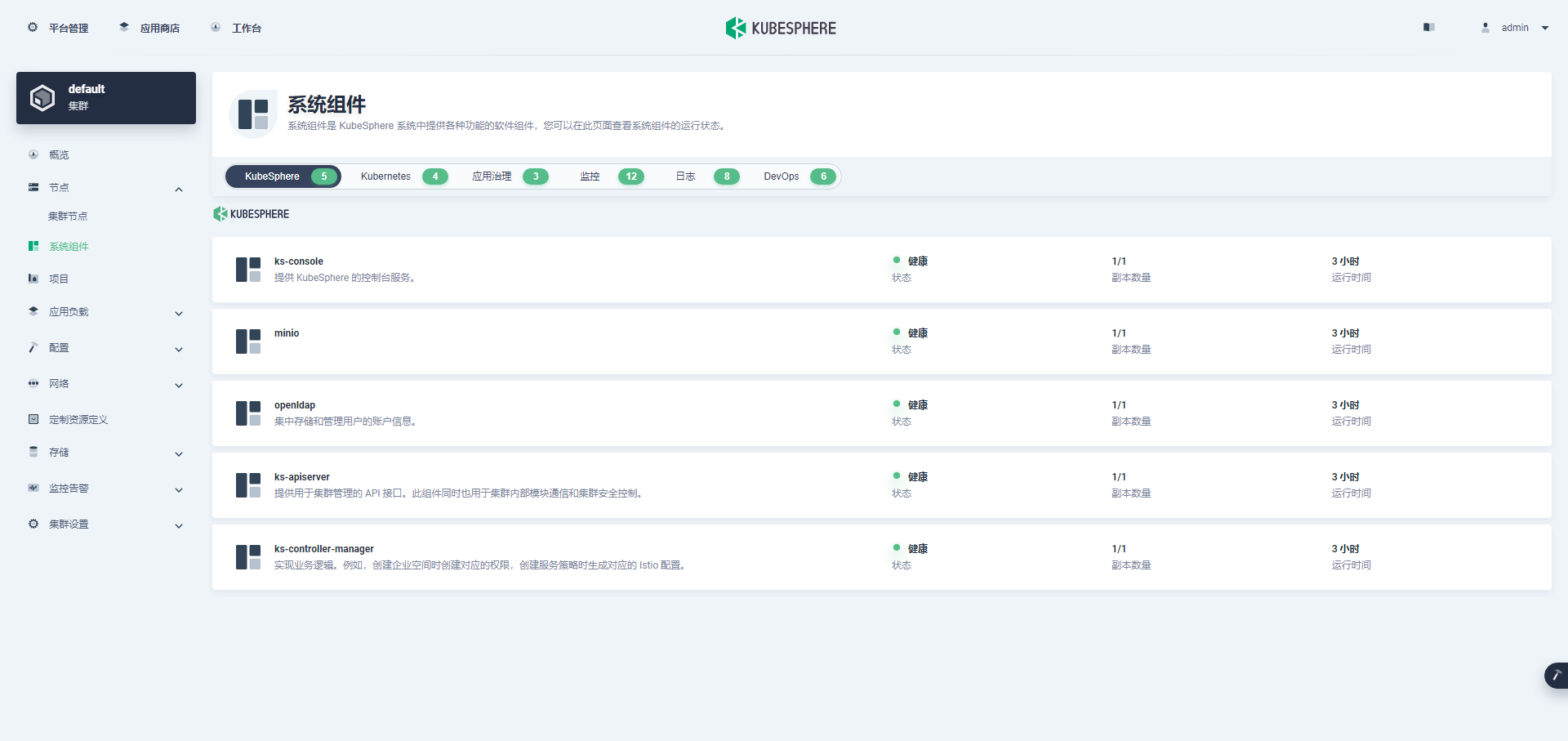

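Click the "System Components" menu on the left to view details of the installed components.

Note: the component status is all yellow. I assumed this was because my demo virtual machines lacked resources, so I raised each machine from 2 CPU cores to 4 and from 4 GB of memory to 8 GB, then rebooted the servers. There was no way around the reboot because the virtual machines do not support hot resizing.

It was also a good chance to see whether Kubernetes and KubeSphere recover automatically after a reboot.

After the first reboot, Kubernetes, application governance, and a few other components were healthy while the rest were still abnormal (no screenshots were taken). I upgraded again, raising the Worker nodes from 4 to 8 CPU cores and leaving the Master nodes at 4C 8G.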

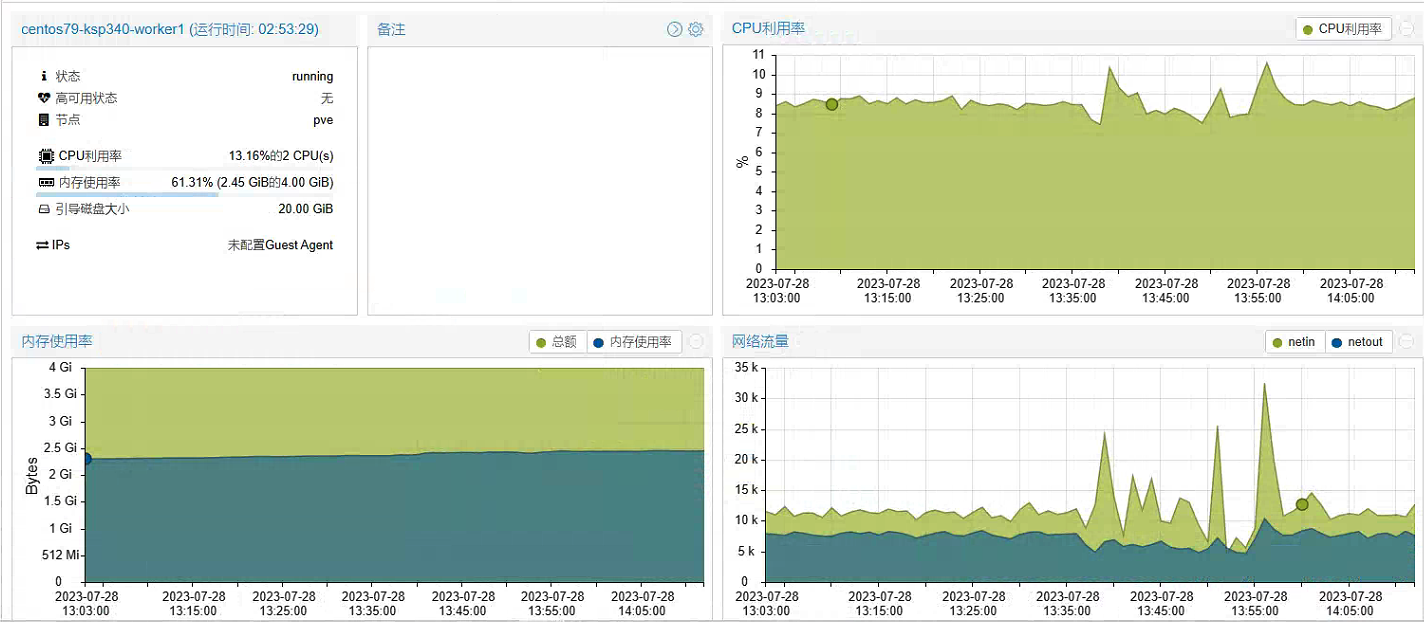

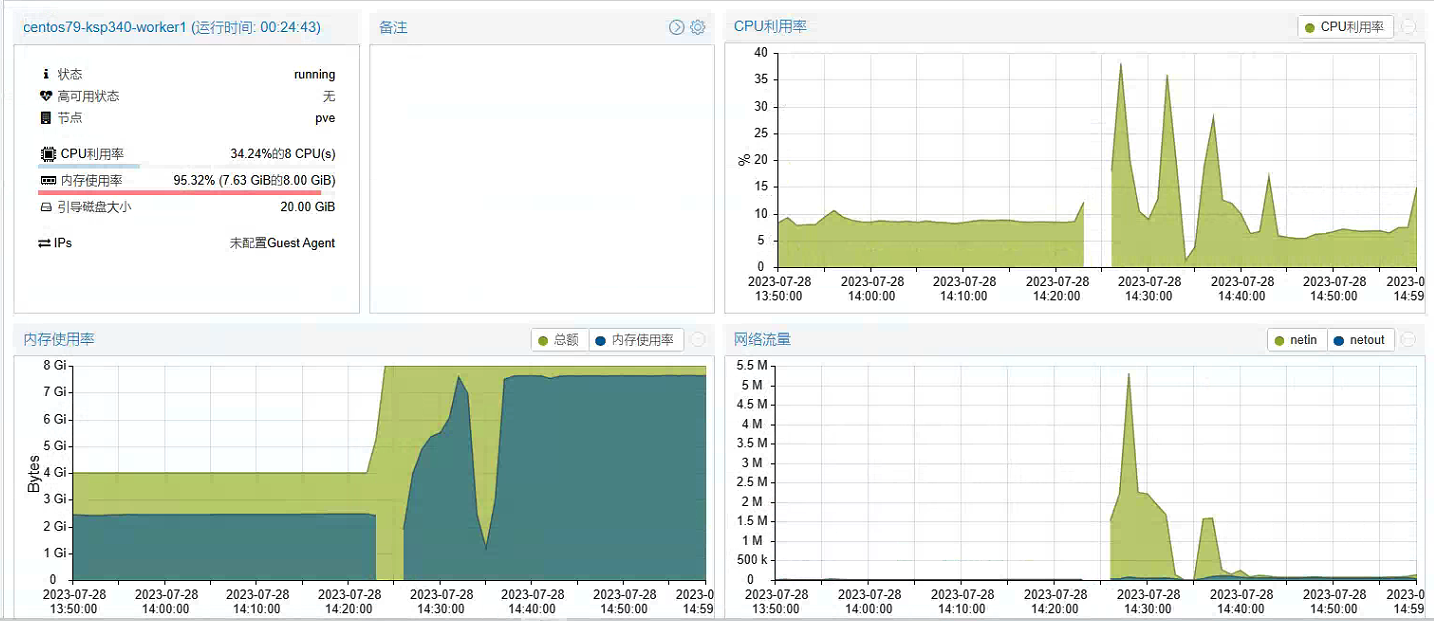

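First, look at the Worker node resource usage monitoring data before the first upgrade (2C 4G).

Looking at the monitoring chart from the virtualization platform above, you may wonder: half of the resources are still free, so why say they are insufficient and need an upgrade? Because the resources were not enough to create the system components, most components on the K8s cluster failed to start and therefore consumed nothing.

Enough talk, let's upgrade!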

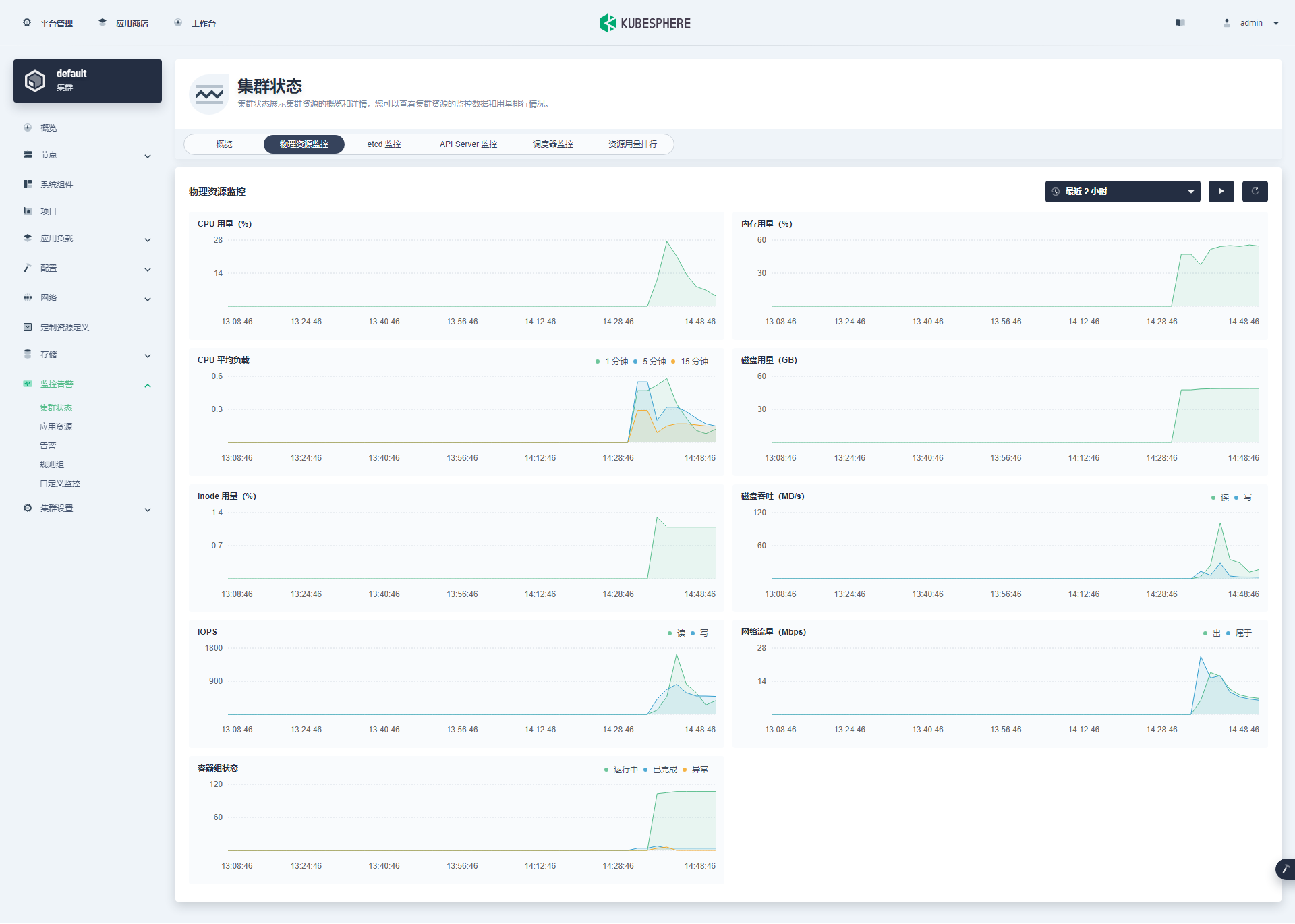

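After the Worker node upgrade (8C 8G) and a reboot, the cluster recovered automatically. Here is a set of screenshots of the cluster in a healthy state.

Note: v3.4.0 appears to have fixed the earlier problem of missing monitoring data on Kubernetes v1.24 and later.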

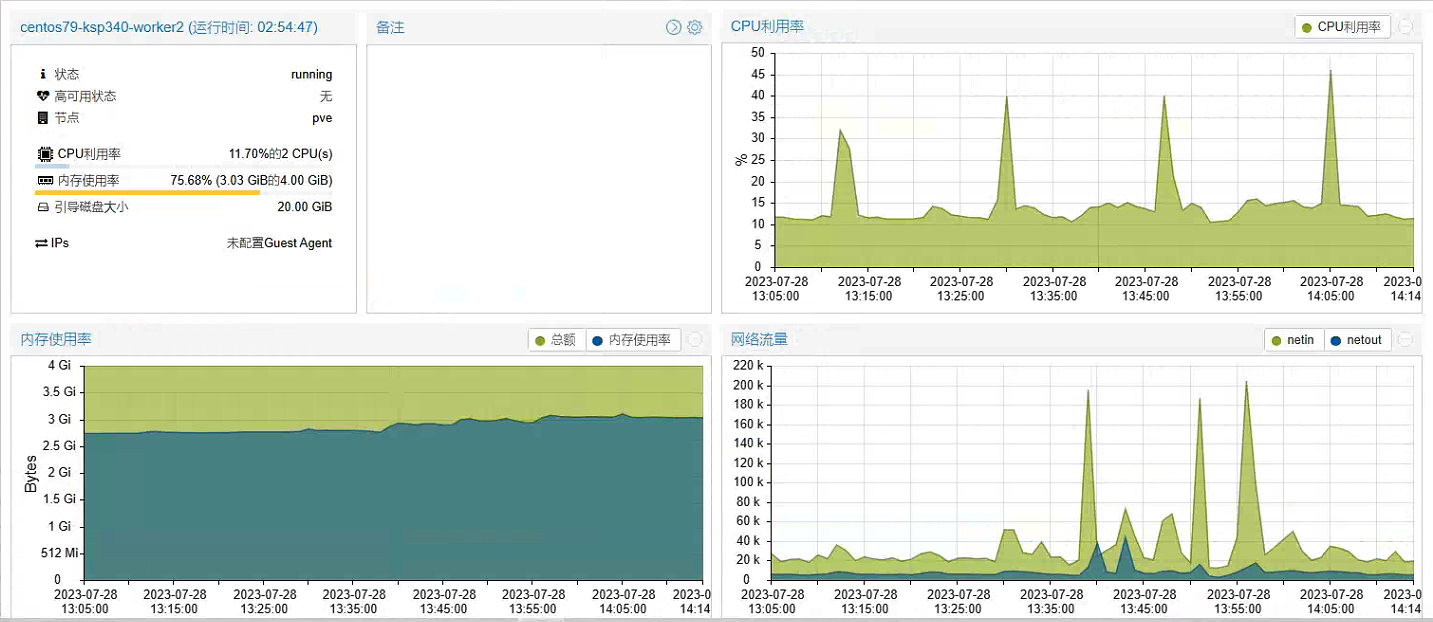

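With 8C 8G Worker nodes, all system components started successfully. However, before I had finished looking at every component, the platform became sluggish again. The machines had hit another bottleneck, so I upgraded a third time and raised the memory of the 3 Worker nodes to 16 GB.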

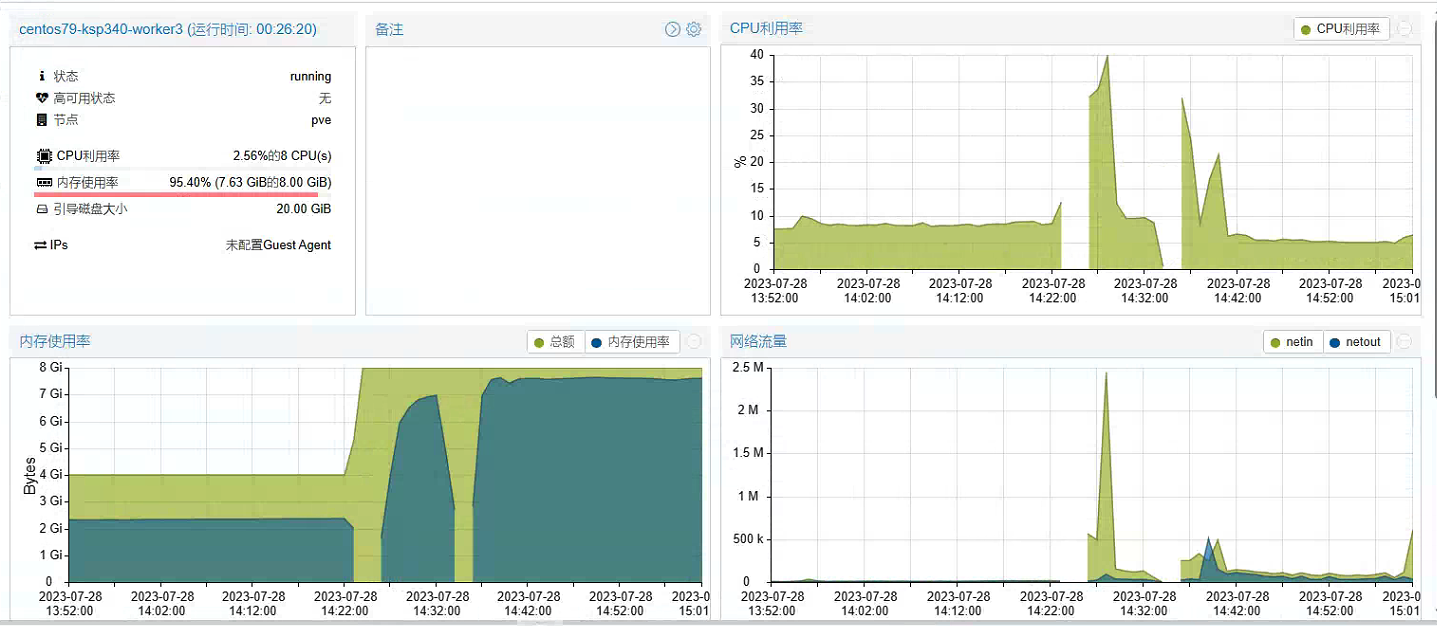

Worker node resource usage before the third upgrade (8C 8G).

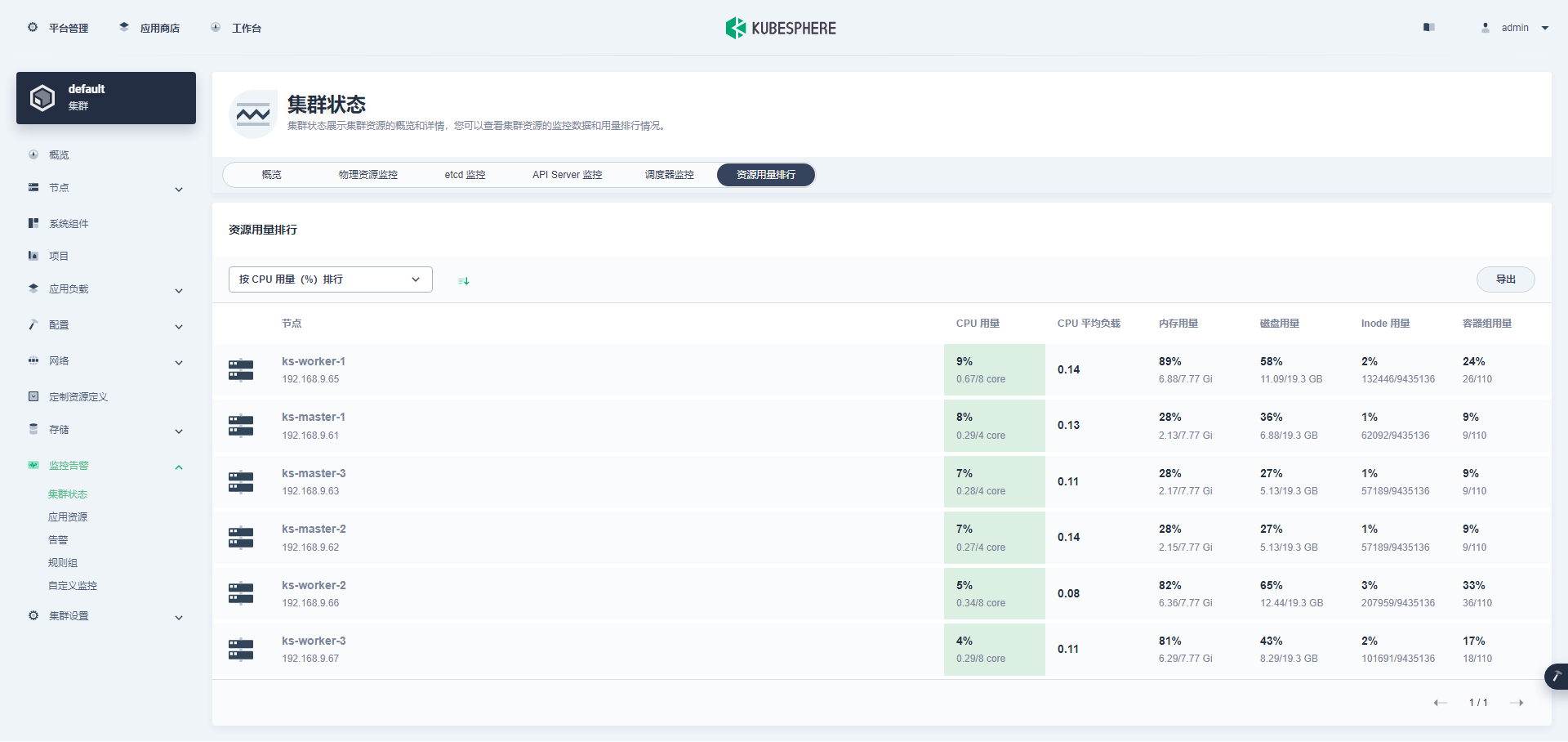

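Worker node resource usage after the third upgrade, with all components running normally (8C 16G).

Master node resource usage with all components running normally (4C 8G).

Node information as shown in the KubeSphere web console.

So let me stress this: to really try the full KubeSphere feature set, Master nodes should have 4C 8G and Worker nodes 8C 16G, otherwise it will not run. Note, however, that once everything is running, CPU consumption is not that high.

Next, a quick look at the status of the pluggable components enabled during deployment.

- KubeSphere DevOps system (the components were created, but the functionality was not verified)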

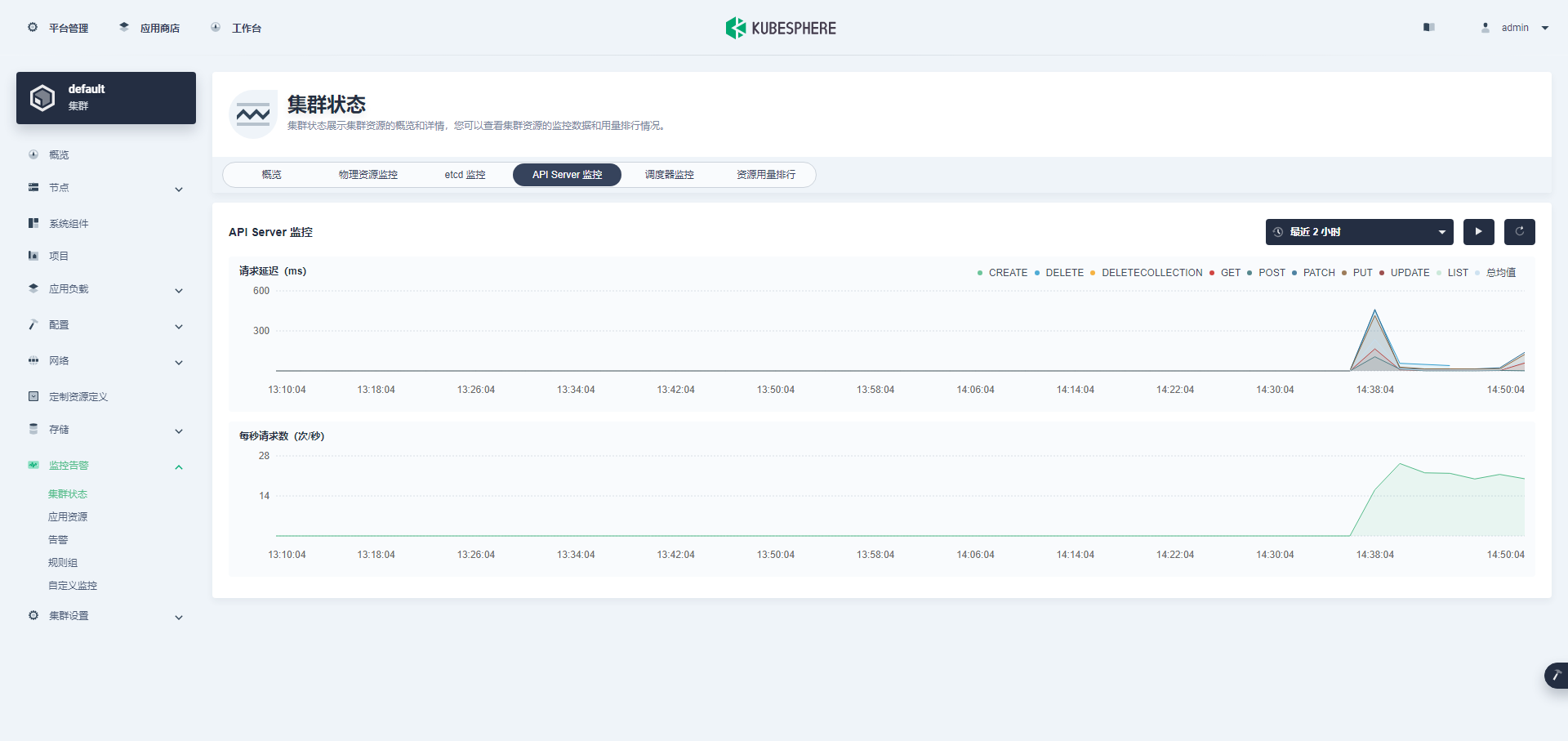

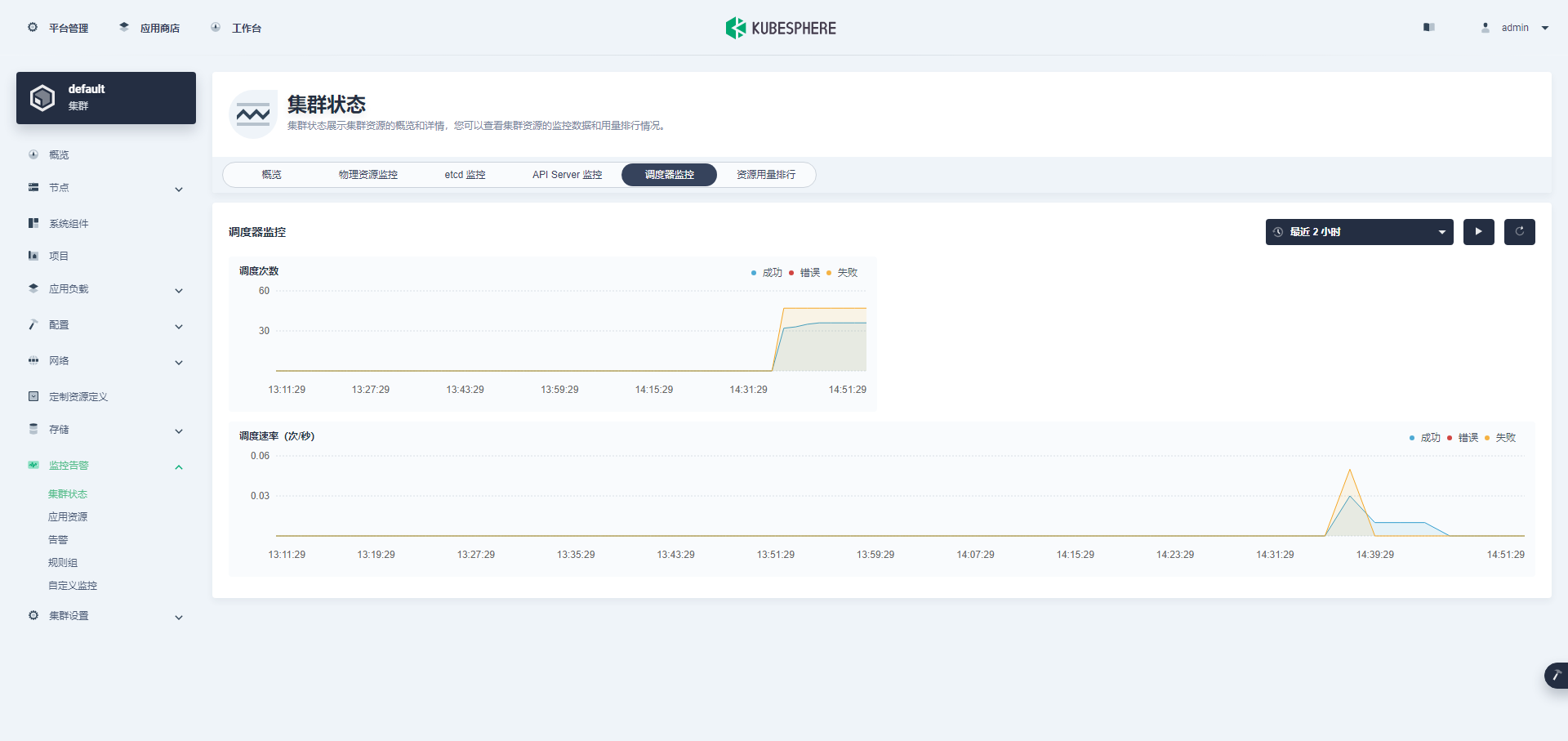

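Finally, a set of monitoring charts to wrap up the graphical verification (etcd monitoring was shown above).

Verify cluster status with the kubectl command line

This section only takes a quick look at the basic status and is not comprehensive. Explore the details yourself.

Run kubectl on the master-1 node to list the available nodes in the Kubernetes cluster.

kubectl get nodes -o wide

The output shows that the cluster currently has six available nodes, along with each node's internal IP, role, Kubernetes version, container runtime and version, operating system type, and kernel version.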

[root@ks-master-1 kubekey]# kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

ks-master-1 Ready control-plane 4h54m v1.26.5 192.168.9.61 <none> CentOS Linux 7 (Core) 5.4.251-1.el7.elrepo.x86_64 containerd://1.6.4

ks-master-2 Ready control-plane 4h53m v1.26.5 192.168.9.62 <none> CentOS Linux 7 (Core) 5.4.251-1.el7.elrepo.x86_64 containerd://1.6.4

ks-master-3 Ready control-plane 4h53m v1.26.5 192.168.9.63 <none> CentOS Linux 7 (Core) 5.4.251-1.el7.elrepo.x86_64 containerd://1.6.4

ks-worker-1 Ready worker 4h53m v1.26.5 192.168.9.65 <none> CentOS Linux 7 (Core) 5.4.251-1.el7.elrepo.x86_64 containerd://1.6.4

ks-worker-2 Ready worker 4h53m v1.26.5 192.168.9.66 <none> CentOS Linux 7 (Core) 5.4.251-1.el7.elrepo.x86_64 containerd://1.6.4

ks-worker-3 Ready worker 4h53m v1.26.5 192.168.9.67 <none> CentOS Linux 7 (Core) 5.4.251-1.el7.elrepo.x86_64 containerd://1.6.4
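For a scripted health check, the node list can be filtered down to nodes that are not Ready. The sketch below is an illustration only: `not_ready_nodes` is a hypothetical helper that expects the output of `kubectl get nodes --no-headers` on stdin and treats any STATUS other than exactly `Ready` as a problem, which includes cordoned nodes such as `Ready,SchedulingDisabled`.

```shell
# Print the names of nodes whose STATUS column is not exactly "Ready".
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}

# Demonstration with sample lines; on the cluster use:
#   kubectl get nodes --no-headers | not_ready_nodes
printf 'ks-master-1 Ready control-plane 4h54m v1.26.5\nks-worker-1 NotReady worker 4h53m v1.26.5\n' |
  not_ready_nodes
```

Empty output means every node reported Ready.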

- Run the following command to list the Pods running on the Kubernetes cluster, sorted by the node each workload is placed on.

kubectl get pods -o wide -A | sort -k 8
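Sorting by the eighth column depends on the column layout of the wide output. If only the number of Pods per node is of interest, an alternative sketch is to print just the node name of each Pod and count the occurrences. `pods_per_node` is a hypothetical helper, and the input is assumed to come from `kubectl get pods -A --no-headers -o custom-columns=NODE:.spec.nodeName`.

```shell
# Count how many Pods run on each node. Expects one node name per line on
# stdin and prints "<node> <count>" per node.
pods_per_node() {
  sort | uniq -c | awk '{ print $2, $1 }'
}

# Demonstration with sample node names; on the cluster use:
#   kubectl get pods -A --no-headers -o custom-columns=NODE:.spec.nodeName | pods_per_node
printf 'ks-worker-1\nks-worker-2\nks-worker-1\n' | pods_per_node
```

Pods that have not been scheduled yet show up under `<none>` in this count.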

Run the following command to list the images already downloaded on the Kubernetes cluster nodes.

crictl images ls

Run it on a Master node and on a Worker node. The output is as follows:

# Master node

[root@ks-master-1 kubekey]# crictl images ls

IMAGE TAG IMAGE ID SIZE

registry.cn-beijing.aliyuncs.com/kubesphereio/cni v3.23.2 a87d3f6f1b8fd 111MB

registry.cn-beijing.aliyuncs.com/kubesphereio/coredns 1.9.3 5185b96f0becf 14.8MB

registry.cn-beijing.aliyuncs.com/kubesphereio/fluent-bit v1.9.4 1bf001b24caa8 27.2MB

registry.cn-beijing.aliyuncs.com/kubesphereio/k8s-dns-node-cache 1.15.12 5340ba194ec91 42.1MB

registry.cn-beijing.aliyuncs.com/kubesphereio/kube-apiserver v1.26.5 25c2ecde661fc 35.5MB

registry.cn-beijing.aliyuncs.com/kubesphereio/kube-controller-manager v1.26.5 a7403c147a516 32.4MB

registry.cn-beijing.aliyuncs.com/kubesphereio/kube-controllers v3.23.2 ec95788d0f725 56.4MB

registry.cn-beijing.aliyuncs.com/kubesphereio/kube-proxy v1.26.5 08440588500d7 21.5MB

registry.cn-beijing.aliyuncs.com/kubesphereio/kube-rbac-proxy v0.11.0 29589495df8d9 19.2MB

registry.cn-beijing.aliyuncs.com/kubesphereio/kube-scheduler v1.26.5 200132c1d91ab 17.7MB

registry.cn-beijing.aliyuncs.com/kubesphereio/node-exporter v1.3.1 1dbe0e9319764 10.3MB

registry.cn-beijing.aliyuncs.com/kubesphereio/node v3.23.2 a3447b26d32c7 77.8MB

registry.cn-beijing.aliyuncs.com/kubesphereio/pause 3.8 4873874c08efc 310kB

registry.cn-beijing.aliyuncs.com/kubesphereio/pause 3.9 e6f1816883972 321kB

registry.cn-beijing.aliyuncs.com/kubesphereio/pod2daemon-flexvol v3.23.2 b21e2d7408a79 8.67MB

registry.cn-beijing.aliyuncs.com/kubesphereio/scope 1.13.0 ca6176be9738f 30.7MB

# Worker node

[root@ks-worker-1 ~]# crictl images ls

IMAGE TAG IMAGE ID SIZE

docker.io/library/busybox latest a416a98b71e22 2.22MB

registry.cn-beijing.aliyuncs.com/kubesphereio/alertmanager v0.23.0 ba2b418f427c0 26.5MB

registry.cn-beijing.aliyuncs.com/kubesphereio/cni v3.23.2 a87d3f6f1b8fd 111MB

registry.cn-beijing.aliyuncs.com/kubesphereio/configmap-reload v0.7.1 1f605d278292f 3.94MB

registry.cn-beijing.aliyuncs.com/kubesphereio/coredns 1.9.3 5185b96f0becf 14.8MB

registry.cn-beijing.aliyuncs.com/kubesphereio/devops-tools ks-v3.4.0 4680d8a8f48f1 31.6MB

registry.cn-beijing.aliyuncs.com/kubesphereio/fluent-bit v1.9.4 1bf001b24caa8 27.2MB

registry.cn-beijing.aliyuncs.com/kubesphereio/haproxy 2.3 0ea9253dad7c0 38.5MB

registry.cn-beijing.aliyuncs.com/kubesphereio/jaeger-operator 1.29 8360f4a8cd334 108MB

registry.cn-beijing.aliyuncs.com/kubesphereio/k8s-dns-node-cache 1.15.12 5340ba194ec91 42.1MB

registry.cn-beijing.aliyuncs.com/kubesphereio/ks-apiserver v3.4.0 e3ea630cb8056 65.8MB

registry.cn-beijing.aliyuncs.com/kubesphereio/ks-console v3.4.0 2928b06e49872 38.9MB

registry.cn-beijing.aliyuncs.com/kubesphereio/ks-controller-manager v3.4.0 0999c21fe5ee2 50.3MB

registry.cn-beijing.aliyuncs.com/kubesphereio/ks-jenkins v3.4.0-2.319.3-1 07bccb8c9e2c2 584MB

registry.cn-beijing.aliyuncs.com/kubesphereio/kube-controllers v3.23.2 ec95788d0f725 56.4MB

registry.cn-beijing.aliyuncs.com/kubesphereio/kube-events-ruler v0.6.0 002d69075c5b0 27.1MB

registry.cn-beijing.aliyuncs.com/kubesphereio/kube-proxy v1.26.5 08440588500d7 21.5MB

registry.cn-beijing.aliyuncs.com/kubesphereio/kube-rbac-proxy v0.11.0 29589495df8d9 19.2MB

registry.cn-beijing.aliyuncs.com/kubesphereio/kube-state-metrics v2.6.0 ec6e2d871c544 12MB

registry.cn-beijing.aliyuncs.com/kubesphereio/linux-utils 3.3.0 e88cfb3a763b9 26.9MB

registry.cn-beijing.aliyuncs.com/kubesphereio/mc RELEASE.2019-08-07T23-14-43Z 2def265e6001b 9.32MB

registry.cn-beijing.aliyuncs.com/kubesphereio/metrics-server v0.4.2 17c225a562d97 25.2MB

registry.cn-beijing.aliyuncs.com/kubesphereio/node-exporter v1.3.1 1dbe0e9319764 10.3MB

registry.cn-beijing.aliyuncs.com/kubesphereio/node v3.23.2 a3447b26d32c7 77.8MB

registry.cn-beijing.aliyuncs.com/kubesphereio/notification-manager-operator v2.3.0 7ffe334bf3772 19.3MB

registry.cn-beijing.aliyuncs.com/kubesphereio/notification-manager v2.3.0 2c35ec9a2c185 21.6MB

registry.cn-beijing.aliyuncs.com/kubesphereio/notification-tenant-sidecar v3.2.0 4b47c43ec6ab6 14.7MB

registry.cn-beijing.aliyuncs.com/kubesphereio/openpitrix-jobs v3.3.2 f4ace17a95725 16.8MB

registry.cn-beijing.aliyuncs.com/kubesphereio/opensearch 2.6.0 feebbf30f81c1 818MB

registry.cn-beijing.aliyuncs.com/kubesphereio/pause 3.8 4873874c08efc 310kB

registry.cn-beijing.aliyuncs.com/kubesphereio/pod2daemon-flexvol v3.23.2 b21e2d7408a79 8.67MB

registry.cn-beijing.aliyuncs.com/kubesphereio/prometheus-config-reloader v0.55.1 7c63de88523a9 4.84MB

registry.cn-beijing.aliyuncs.com/kubesphereio/prometheus-operator v0.55.1 b30c215b787f5 14.3MB

registry.cn-beijing.aliyuncs.com/kubesphereio/prometheus v2.39.1 6b9895947e9e4 88.5MB

registry.cn-beijing.aliyuncs.com/kubesphereio/s2ioperator v3.2.1 dd77b631d930d 13MB

registry.cn-beijing.aliyuncs.com/kubesphereio/scope 1.13.0 ca6176be9738f 30.7MB

registry.cn-beijing.aliyuncs.com/kubesphereio/snapshot-controller v4.0.0 f1d8a00ae690f 19MB

registry.cn-beijing.aliyuncs.com/kubesphereio/thanos v0.31.0 765df1804bae1 40MB

The Worker node holds more images because it runs the other services in addition to the core K8s components.
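To compare the image sets of two nodes more precisely than by eye, the listings can be reduced to repository:tag pairs and then diffed. The following is only a minimal sketch: it assumes the whitespace-separated column layout of the crictl output shown above, and the function name list_image_refs is a hypothetical helper, not part of crictl.

```shell
# Hypothetical helper: read `crictl images` output on stdin and
# print one "repository:tag" pair per image.
list_image_refs() {
  # Skip blank lines (NF < 2) and the header row whose first column is "IMAGE".
  awk 'NF >= 2 && $1 != "IMAGE" { print $1 ":" $2 }'
}

# Usage on each node (requires crictl), then compare the files with diff or comm:
#   crictl images | list_image_refs | sort > /tmp/images-$(hostname).txt
```

Sorting the output before saving it keeps the per-node files directly comparable.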

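At this point, we have deployed a highly available Kubernetes cluster with 3 Master nodes and 3 Worker nodes, together with KubeSphere. We also checked the cluster status through the KubeSphere web console and the command line.

Common Issues

Issue 1: package conflict when installing kernel-lt-tools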

[root@ks-master-1 ~]# yum -y --enablerepo=elrepo-kernel install kernel-lt kernel-lt-tools kernel-lt-tools-libs

Loaded plugins: fastestmirror

Loading mirror speeds from cached hostfile

* base: mirrors.aliyun.com

* elrepo: hkg.mirror.rackspace.com

* elrepo-kernel: hkg.mirror.rackspace.com

* extras: mirrors.aliyun.com

* updates: mirrors.aliyun.com

elrepo | 3.0 kB 00:00:00

elrepo/primary_db | 350 kB 00:00:00

Resolving Dependencies

--> Running transaction check

---> Package kernel-lt.x86_64 0:5.4.251-1.el7.elrepo will be installed

---> Package kernel-lt-tools.x86_64 0:5.4.251-1.el7.elrepo will be installed

---> Package kernel-lt-tools-libs.x86_64 0:5.4.251-1.el7.elrepo will be installed

--> Processing Conflict: kernel-lt-tools-5.4.251-1.el7.elrepo.x86_64 conflicts kernel-tools < 5.4.251-1.el7.elrepo

--> Restarting Dependency Resolution with new changes.

--> Running transaction check

---> Package kernel-tools.x86_64 0:3.10.0-1160.71.1.el7 will be updated

---> Package kernel-tools.x86_64 0:3.10.0-1160.92.1.el7 will be an update

--> Processing Dependency: kernel-tools-libs = 3.10.0-1160.92.1.el7 for package: kernel-tools-3.10.0-1160.92.1.el7.x86_64

--> Running transaction check

---> Package kernel-tools-libs.x86_64 0:3.10.0-1160.71.1.el7 will be updated

---> Package kernel-tools-libs.x86_64 0:3.10.0-1160.92.1.el7 will be an update

--> Processing Conflict: kernel-lt-tools-libs-5.4.251-1.el7.elrepo.x86_64 conflicts kernel-tools-libs < 5.4.251-1.el7.elrepo

--> Processing Conflict: kernel-lt-tools-5.4.251-1.el7.elrepo.x86_64 conflicts kernel-tools < 5.4.251-1.el7.elrepo

--> Finished Dependency Resolution

Error: kernel-lt-tools-libs conflicts with kernel-tools-libs-3.10.0-1160.92.1.el7.x86_64

Error: kernel-lt-tools conflicts with kernel-tools-3.10.0-1160.92.1.el7.x86_64

You could try using --skip-broken to work around the problem

You could try running: rpm -Va --nofiles --nodigest

# Remove the old kernel-tools packages

yum remove kernel-tools-3.10.0-1160.71.1.el7.x86_64 kernel-tools-libs-3.10.0-1160.71.1.el7.x86_64

# Install the new kernel-tools packages

yum --enablerepo=elrepo-kernel install kernel-lt-tools kernel-lt-tools-libs
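The remove command above hard-codes the package version found on this host (3.10.0-1160.71.1.el7), which may differ on other servers. As a hedged sketch, the installed stock packages can be discovered first; the function name stock_kernel_tools is a hypothetical helper, and the pattern assumes the usual name-version naming printed by rpm -qa.

```shell
# Hypothetical helper: read package names on stdin and keep only the
# stock kernel-tools and kernel-tools-libs packages (not the kernel-lt ones).
stock_kernel_tools() {
  grep -E '^kernel-tools(-libs)?-[0-9]'
}

# Usage on a CentOS host (review the list before removing anything):
#   rpm -qa | stock_kernel_tools
#   yum remove $(rpm -qa | stock_kernel_tools)
```

Printing the list before passing it to yum remove makes it easy to confirm that only the two conflicting packages are affected.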

Issue 2: Helm binary download fails during create cluster

# Error reported while running create cluster

downloading amd64 helm v3.9.0 ...

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

0 13.3M 0 111k 0 0 978 0 3:57:46 0:01:56 3:55:50 0

curl: (56) TCP connection reset by peer

10:17:32 CST [WARN] Having a problem with accessing https://storage.googleapis.com? You can try again after setting environment 'export KKZONE=cn'

10:17:32 CST message: [LocalHost]

Failed to download helm binary: curl -L -o /root/kubekey/kubekey/helm/v3.9.0/amd64/helm-v3.9.0-linux-amd64.tar.gz https://get.helm.sh/helm-v3.9.0-linux-amd64.tar.gz && cd /root/kubekey/kubekey/helm/v3.9.0/amd64 && tar -zxf helm-v3.9.0-linux-amd64.tar.gz && mv linux-amd64/helm . && rm -rf *linux-amd64* error: exit status 56

10:17:32 CST failed: [LocalHost]

error: Pipeline[CreateClusterPipeline] execute failed: Module[NodeBinariesModule] exec failed:

failed: [LocalHost] [DownloadBinaries] exec failed after 1 retries: Failed to download helm binary: curl -L -o /root/kubekey/kubekey/helm/v3.9.0/amd64/helm-v3.9.0-linux-amd64.tar.gz https://get.helm.sh/helm-v3.9.0-linux-amd64.tar.gz && cd /root/kubekey/kubekey/helm/v3.9.0/amd64 && tar -zxf helm-v3.9.0-linux-amd64.tar.gz && mv linux-amd64/helm . && rm -rf *linux-amd64* error: exit status 56

# Run this before the cluster deployment command

export KKZONE=cn
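The export only lasts for the current shell session, so a new login would hit the same download error again. One possible way to make it stick is to append the line to a shell profile exactly once. This is a sketch under assumptions: persist_kkzone is a hypothetical helper, and using ~/.bashrc as the default profile is my choice, not something KubeKey requires.

```shell
# Hypothetical helper: append "export KKZONE=cn" to a profile file,
# but only if that exact line is not already present.
persist_kkzone() {
  profile="${1:-$HOME/.bashrc}"
  line='export KKZONE=cn'
  # -x matches the whole line, -F treats the pattern as a fixed string.
  grep -qxF "$line" "$profile" 2>/dev/null || printf '%s\n' "$line" >> "$profile"
}

# Usage:
#   persist_kkzone            # writes to ~/.bashrc
#   persist_kkzone /etc/profile.d/kkzone.sh
```

Because the check is idempotent, running the helper repeatedly does not add duplicate lines.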

Issue 3: kubeadm init fails when deploying Kubernetes v1.27.2

11:10:33 CST [InitKubernetesModule] Init cluster using kubeadm

11:10:34 CST stdout: [ks-master-1]

your configuration file uses an old API spec: "kubeadm.k8s.io/v1beta2". Please use kubeadm v1.22 instead and run 'kubeadm config migrate --old-config old.yaml --new-config new.yaml', which will write the new, similar spec using a newer API version.

To see the stack trace of this error execute with --v=5 or higher

11:10:34 CST stdout: [ks-master-1]

[preflight] Running pre-flight checks

W0728 11:10:34.057704 5978 removeetcdmember.go:106] [reset] No kubeadm config, using etcd pod spec to get data directory

[reset] Deleted contents of the etcd data directory: /var/lib/etcd

[reset] Stopping the kubelet service

[reset] Unmounting mounted directories in "/var/lib/kubelet"

W0728 11:10:34.062055 5978 cleanupnode.go:134] [reset] Failed to evaluate the "/var/lib/kubelet" directory. Skipping its unmount and cleanup: lstat /var/lib/kubelet: no such file or directory

[reset] Deleting contents of directories: [/etc/kubernetes/manifests /etc/kubernetes/pki]

[reset] Deleting files: [/etc/kubernetes/admin.conf /etc/kubernetes/kubelet.conf /etc/kubernetes/bootstrap-kubelet.conf /etc/kubernetes/controller-manager.conf /etc/kubernetes/scheduler.conf]

The reset process does not clean CNI configuration. To do so, you must remove /etc/cni/net.d

The reset process does not reset or clean up iptables rules or IPVS tables.

If you wish to reset iptables, you must do so manually by using the "iptables" command.

If your cluster was setup to utilize IPVS, run ipvsadm --clear (or similar)

to reset your system's IPVS tables.

The reset process does not clean your kubeconfig files and you must remove them manually.

Please, check the contents of the $HOME/.kube/config file.

11:10:34 CST message: [ks-master-1]

init kubernetes cluster failed: Failed to exec command: sudo -E /bin/bash -c "/usr/local/bin/kubeadm init --config=/etc/kubernetes/kubeadm-config.yaml --ignore-preflight-errors=FileExisting-crictl,ImagePull"

your configuration file uses an old API spec: "kubeadm.k8s.io/v1beta2". Please use kubeadm v1.22 instead and run 'kubeadm config migrate --old-config old.yaml --new-config new.yaml', which will write the new, similar spec using a newer API version.

To see the stack trace of this error execute with --v=5 or higher: Process exited with status 1

11:10:34 CST retry: [ks-master-1]

11:10:39 CST stdout: [ks-master-1]

your configuration file uses an old API spec: "kubeadm.k8s.io/v1beta2". Please use kubeadm v1.22 instead and run 'kubeadm config migrate --old-config old.yaml --new-config new.yaml', which will write the new, similar spec using a newer API version.

To see the stack trace of this error execute with --v=5 or higher

11:10:39 CST stdout: [ks-master-1]

[preflight] Running pre-flight checks

W0728 11:10:39.194589 6014 removeetcdmember.go:106] [reset] No kubeadm config, using etcd pod spec to get data directory

[reset] Stopping the kubelet service

[reset] Unmounting mounted directories in "/var/lib/kubelet"

W0728 11:10:39.198371 6014 cleanupnode.go:134] [reset] Failed to evaluate the "/var/lib/kubelet" directory. Skipping its unmount and cleanup: lstat /var/lib/kubelet: no such file or directory

[reset] Deleting contents of directories: [/etc/kubernetes/manifests /etc/kubernetes/pki]

[reset] Deleting files: [/etc/kubernetes/admin.conf /etc/kubernetes/kubelet.conf /etc/kubernetes/bootstrap-kubelet.conf /etc/kubernetes/controller-manager.conf /etc/kubernetes/scheduler.conf]

The reset process does not clean CNI configuration. To do so, you must remove /etc/cni/net.d

The reset process does not reset or clean up iptables rules or IPVS tables.

If you wish to reset iptables, you must do so manually by using the "iptables" command.

If your cluster was setup to utilize IPVS, run ipvsadm --clear (or similar)

to reset your system's IPVS tables.

The reset process does not clean your kubeconfig files and you must remove them manually.

Please, check the contents of the $HOME/.kube/config file.

11:10:39 CST message: [ks-master-1]

init kubernetes cluster failed: Failed to exec command: sudo -E /bin/bash -c "/usr/local/bin/kubeadm init --config=/etc/kubernetes/kubeadm-config.yaml --ignore-preflight-errors=FileExisting-crictl,ImagePull"

your configuration file uses an old API spec: "kubeadm.k8s.io/v1beta2". Please use kubeadm v1.22 instead and run 'kubeadm config migrate --old-config old.yaml --new-config new.yaml', which will write the new, similar spec using a newer API version.

To see the stack trace of this error execute with --v=5 or higher: Process exited with status 1

11:10:39 CST retry: [ks-master-1]

11:10:44 CST stdout: [ks-master-1]

your configuration file uses an old API spec: "kubeadm.k8s.io/v1beta2". Please use kubeadm v1.22 instead and run 'kubeadm config migrate --old-config old.yaml --new-config new.yaml', which will write the new, similar spec using a newer API version.

To see the stack trace of this error execute with --v=5 or higher

11:10:44 CST stdout: [ks-master-1]

[preflight] Running pre-flight checks

W0728 11:10:44.329364 6049 removeetcdmember.go:106] [reset] No kubeadm config, using etcd pod spec to get data directory

[reset] Stopping the kubelet service

[reset] Unmounting mounted directories in "/var/lib/kubelet"

W0728 11:10:44.333092 6049 cleanupnode.go:134] [reset] Failed to evaluate the "/var/lib/kubelet" directory. Skipping its unmount and cleanup: lstat /var/lib/kubelet: no such file or directory

[reset] Deleting contents of directories: [/etc/kubernetes/manifests /etc/kubernetes/pki]

[reset] Deleting files: [/etc/kubernetes/admin.conf /etc/kubernetes/kubelet.conf /etc/kubernetes/bootstrap-kubelet.conf /etc/kubernetes/controller-manager.conf /etc/kubernetes/scheduler.conf]

The reset process does not clean CNI configuration. To do so, you must remove /etc/cni/net.d

The reset process does not reset or clean up iptables rules or IPVS tables.

If you wish to reset iptables, you must do so manually by using the "iptables" command.

If your cluster was setup to utilize IPVS, run ipvsadm --clear (or similar)

to reset your system's IPVS tables.

The reset process does not clean your kubeconfig files and you must remove them manually.

Please, check the contents of the $HOME/.kube/config file.

11:10:44 CST message: [ks-master-1]

init kubernetes cluster failed: Failed to exec command: sudo -E /bin/bash -c "/usr/local/bin/kubeadm init --config=/etc/kubernetes/kubeadm-config.yaml --ignore-preflight-errors=FileExisting-crictl,ImagePull"

your configuration file uses an old API spec: "kubeadm.k8s.io/v1beta2". Please use kubeadm v1.22 instead and run 'kubeadm config migrate --old-config old.yaml --new-config new.yaml', which will write the new, similar spec using a newer API version.

To see the stack trace of this error execute with --v=5 or higher: Process exited with status 1

11:10:44 CST skipped: [ks-master-3]

11:10:44 CST skipped: [ks-master-2]

11:10:44 CST failed: [ks-master-1]

error: Pipeline[CreateClusterPipeline] execute failed: Module[InitKubernetesModule] exec failed:

failed: [ks-master-1] [KubeadmInit] exec failed after 3 retries: init kubernetes cluster failed: Failed to exec command: sudo -E /bin/bash -c "/usr/local/bin/kubeadm init --config=/etc/kubernetes/kubeadm-config.yaml --ignore-preflight-errors=FileExisting-crictl,ImagePull"

your configuration file uses an old API spec: "kubeadm.k8s.io/v1beta2". Please use kubeadm v1.22 instead and run 'kubeadm config migrate --old-config old.yaml --new-config new.yaml', which will write the new, similar spec using a newer API version.

To see the stack trace of this error execute with --v=5 or higher: Process exited with status 1

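I ran the deployment twice on a clean environment and got the same error both times. Manually running kubeadm config migrate, as the message suggests, still failed.

KubeKey v3.0.9 evidently cannot deploy v1.27.2, which I believe is a bug: according to the log, the generated kubeadm configuration still uses the kubeadm.k8s.io/v1beta2 API, which this kubeadm version rejects. I switched to v1.26.5, and the deployment then succeeded on the first attempt.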
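To confirm which kubeadm API version the generated configuration declares, the file named in the log can be inspected directly. The snippet below is a minimal sketch: kubeadm_api_versions is a hypothetical helper, and it assumes /etc/kubernetes/kubeadm-config.yaml is still present on the node after the failed run.

```shell
# Hypothetical helper: print the distinct kubeadm.k8s.io API versions
# declared by the YAML documents in the given config file.
kubeadm_api_versions() {
  grep -E '^apiVersion:[[:space:]]*kubeadm\.k8s\.io/' "$1" | awk '{ print $2 }' | sort -u
}

# Usage on the first master node:
#   kubeadm_api_versions /etc/kubernetes/kubeadm-config.yaml
```

If the output shows kubeadm.k8s.io/v1beta2, it matches the "old API spec" message in the log above.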

Issue 4: registry certificate generation fails

12:42:11 CST [InitRegistryModule] Fetch registry certs

12:42:11 CST success: [ks-worker-1]

12:42:11 CST [InitRegistryModule] Generate registry Certs

[certs] Generating "ca" certificate and key

12:42:12 CST message: [LocalHost]

unable to sign certificate: must specify a CommonName

12:42:12 CST failed: [LocalHost]

error: Pipeline[InitRegistryPipeline] execute failed: Module[InitRegistryModule] exec failed:

failed: [LocalHost] [GenerateRegistryCerts] exec failed after 1 retries: unable to sign certificate: must specify a CommonName

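This one is left as a loose end; I did not resolve it.

Conclusion

This article walked through the detailed process of using KubeKey v3.0.9 to automatically deploy a highly available cluster of KubeSphere v3.4.0 and Kubernetes v1.26.5 on CentOS 7.9 servers.

After the deployment, we also used the KubeSphere web console and the kubectl command line to check and verify the status of KubeSphere and the Kubernetes cluster.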

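The original plan was to try Kubernetes v1.27.2, but the deployment kept failing, so I switched to v1.26.5 as a stopgap. To reflect the original intent, however, the Kubernetes version in the article title is still v1.27.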

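To be honest, my own installation went fairly smoothly. Apart from the one version incompatibility, I did not run into the pitfalls that others have reported, although that may be because I only scratched the surface and did not test in depth.

That said, past experience suggests that a new release will inevitably have some problems, and in the KubeSphere community chat groups quite a few early adopters have indeed reported issues with v3.4.0.

For that reason, I do not recommend using v3.4.0 in production. If you run into problems after upgrading, you can discuss them on the official forum, and if you hit a bug, you can submit an issue.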

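This article was published in something of a hurry, and the ending is not perfect. Readers are welcome to contact me to point out any errors. A companion video is available at: https://www.bilibili.com/video/BV1Fh4y1C76p/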